The Gist

- AI conversations are training data by default. Most major AI platforms automatically use user interactions to improve their models unless the setting is manually disabled.

- Privacy controls are often buried in settings. Users must actively opt out of data collection in tools like ChatGPT, Claude and Gemini if they want to prevent their prompts and conversations from being used for model training.

- AI governance now affects customer trust. As employees and customers rely more on generative AI, organizations must understand how sensitive data flows through these systems and establish clear policies for safe use.

Editor's note: For customer experience leaders, this article is a reminder that trust is shaped not only by the experiences brands design, but also by the tools employees and customers increasingly rely on every day. As generative AI becomes more embedded in service, marketing and digital workflows, leaders need to understand the privacy tradeoffs hiding behind default settings and recognize that governance, transparency and data stewardship now play a direct role in protecting customer trust.

Earlier this month, I was surprised to learn that the world's leading AI labs have granted themselves free rein to train on our conversations. I've since fixed the problem for my account, and you might want to as well.

Table of Contents

- What AI Labs Are Really Collecting by Default

- Why Customer Experience Leaders Should Pay Attention

- How to Opt Out of LLM Training on Private Data

What AI Labs Are Really Collecting by Default

Amazon, Anthropic, Google, OpenAI, Meta, and Microsoft have all built default settings that allow them to train on anything you type into the chatbot window, from medical records to open-hearted confessions. Unless you toggle the setting off, you've granted them the right to use all your AI interactions for training.

Yikes, right?

Why Customer Experience Leaders Should Pay Attention

If you're mostly using the bots for rudimentary work, that's probably fine. But if you're inputting financial, medical or other personal information (I'm guilty of all of the above), it's less advisable. The same goes, even more so, for customer data.

"You're opted-in by default," Dr. Jennifer King, privacy and data policy fellow at Stanford's Institute for Human-Centered Artificial Intelligence, told me. "They are collecting all of your conversations."

Dr. King is the lead author of a viral paper that examined these companies' data collection processes last year. The paper, called "User Privacy and Large Language Models," highlights a privacy issue that will only become more urgent as people trust increasingly capable chatbots with more sensitive documents.

And given that these AI research outfits have exhausted almost all available data on the internet (and elsewhere), new human-written data coming in via our conversations with the chatbots is particularly precious. "It's very valuable," said King. "The research today shows that if you keep retraining on AI-generated content, you end up with model collapse."

The AI model builders do install guardrails meant to keep chatbots from spitting out personal information, and many strip identifying information out of their training sets. But given the sheer volume of data involved, that's a risky process to rely on.


How to Opt Out of LLM Training on Private Data

The chatbots' privacy settings are somewhat hard to find and interpret. So, in the spirit of full disclosure, here's where they live, at least for ChatGPT, Claude, and Gemini:

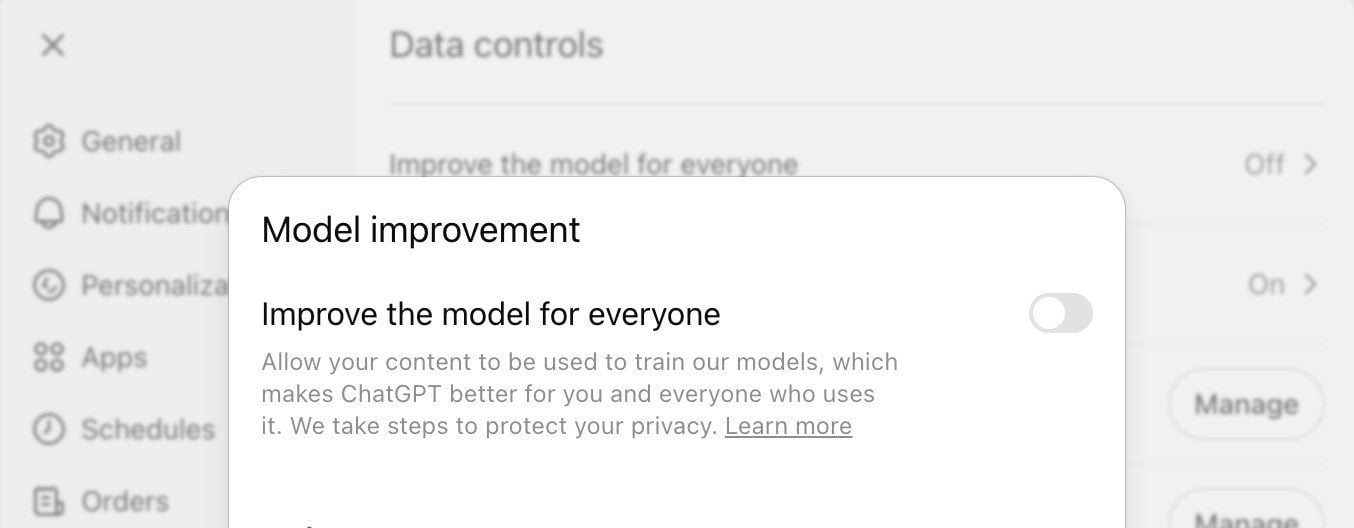

ChatGPT

Within the Data Controls section of ChatGPT's settings, the "Improve the model for everyone" toggle opts your conversations in to training. Turn it off, and the company no longer has your permission to train on your chats.

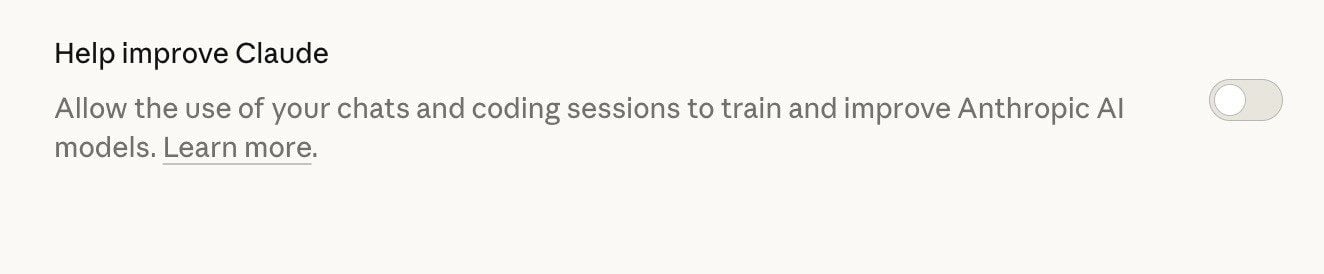

Claude

Inside Claude's Privacy section, the "Help Improve Claude" setting can be toggled off to remove your conversations from training.

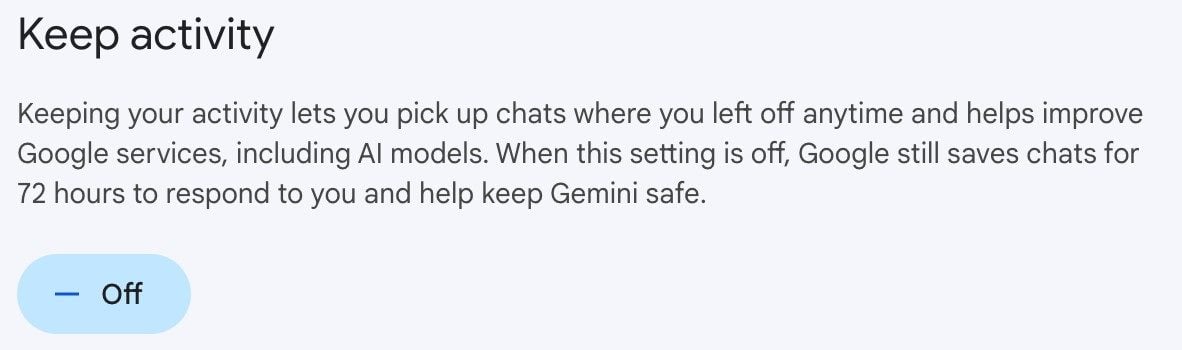

Gemini

And for Gemini, head to the Activity section within settings and turn activity off to prevent your conversations from being used for training.

The squishy wording of these toggles is "obscuring what they're really doing by appealing to your social good, essentially," Dr. King said. "It frames it as a trade-off, that you're going to make this thing worse if you don't comply."

Now, at least you have the information. Go do with it what you will.
