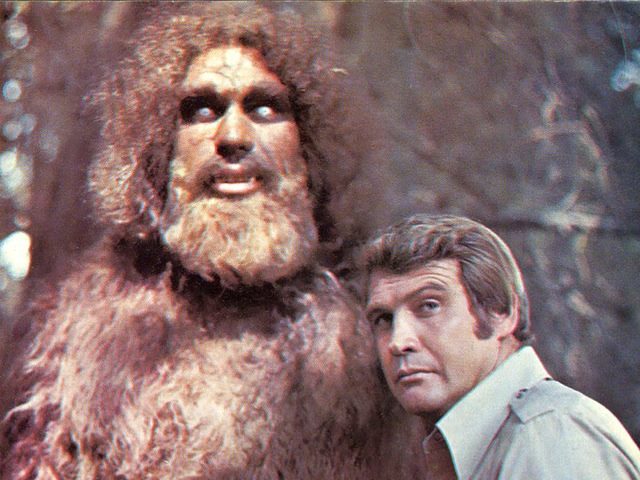

One of the most popular TV shows of the mid-'70s was The Six Million Dollar Man, which told the story of astronaut Steve Austin, who after an accident was rebuilt as a superhuman cyborg combining the best of the human mind with robotic enhancements. The show's "Better. Stronger. Faster." tagline has become a foundational theme in describing the benefits of technology. Whether you prefer the wording of the 1970s version or the 2007 Daft Punk "Harder, Better, Faster, Stronger," the basic themes resonate with organizations that want to improve the value and power of their technologies.

Big data is no exception. Although we have used the three Vs of Volume, Variety and Velocity as a basis for defining big data for over a decade, the truth is that each of these Vs is addressed by different technologies -- no single solution solves all three problems. This conflation of big data characteristics has only become more confusing since the phrase "big data" truly took off in 2011.

Because of this confusion, it's easy to find users who will alternately define big data in terms of petabytes or gigabytes, depending on their need for performance, the types of data being analyzed and the fundamental need for speed in processing, analyzing and making data-driven decisions. Regardless of the scale of data that we currently consider big, the one constant is that we should expect big data to grow by orders of magnitude over the next five years as biological structures, environmental data and the Internet of Things create additional primary data and metadata used to contextualize every interaction and transaction in our lives.

So rather than work with a three Vs approach that even Gartner believes is only a starting point, let's focus on big data in terms of actual technological value. Instead of qualifying the data itself, let's take the Daft Punk or Six Million Dollar Man view and figure out what makes big data "Better, Stronger, and Faster."

Better Big Data

To make big data better, we need to stop talking about how the quality of data matters less in a big data world. If quantity and repetition determined the value of data, we would probably assume that every Twitter utterance by Kim Kardashian and Justin Bieber would be more meaningful than the combined works of Shakespeare. Although some big data scientist of the future may look back at the 21st century and determine that this is the case, this finding would only prove that we as a culture had never solved the true challenges of big data.

We are only starting to reach the point where we can truly focus on the quality of big data. Wikipedia has over 70,000 active contributors who clean up its big data and keep the environment clean over time. As an open community, Wikipedia has become the standard for showing how the quality of big data can actually be improved.

This evolution is still only at its starting point. Purely programmatic automation efforts to make data "better" currently lack the nuance and contextual knowledge needed to produce improved recommendations. In truth, the vast majority of enterprise data is siloed or otherwise inaccessible to the employees, partners and customers who would actually be able to correct it. And we are only starting to see the launch of self-service and automated data quality tools that will give line-of-business employees the power to fix their own data, both from startups such as Paxata, Trifacta and Tamr and from larger vendors' efforts such as IBM's Watson Analytics and Informatica's Springbok. Until we put the power of data quality into the hands of the masses, big data will struggle to become better.

Stronger Big Data

Another key issue with big data -- especially as it continues to outstrip the volumes of traditional data solutions -- is the challenge of maintaining its purity and context. This challenge ranges from the high-level concerns of business continuity and disaster recovery to the most granular concerns of data corruption. It is handled today by a combination of data scanning, file detection, data replication, data integration and data recovery. But between all of these areas are gaps that prevent big data from being as strong and resilient as it needs to be.

Regardless of volume, velocity and variety, big data must be effectively stored, transferred, transformed and analyzed without threatening the original data. This means that companies must figure out how to bring their storage, transfer, recovery and scrubbing activities together into an integrated big data resiliency department.

One of the biggest challenges to these efforts is synchronizing internal enterprise data scrubbing and replication with the similar efforts conducted by managed cloud service vendors. Although the techniques, technologies and efforts may be similar in nature, even simple operational tasks such as matching scrubbing frequency and performance metrics across a hybrid environment can be difficult to manage. But as we think about the strength of big data, it is increasingly important to bridge the gaps between firmware error detection, data integrity, data scrubbing, data replication and data management.
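To make the idea of scrubbing and replication working together a little more concrete, here is a minimal sketch in Python. It assumes data is held as named blocks in memory and that a SHA-256 checksum is an acceptable integrity check; the function names (`build_manifest`, `scrub`, `repair`) are hypothetical and chosen for illustration only, not taken from any vendor's product.

```python
import hashlib
from typing import Dict, List


def checksum(data: bytes) -> str:
    """Return the SHA-256 hex digest used as the integrity fingerprint."""
    return hashlib.sha256(data).hexdigest()


def build_manifest(blocks: Dict[str, bytes]) -> Dict[str, str]:
    """Record a checksum for every block while the data is known to be good."""
    return {block_id: checksum(data) for block_id, data in blocks.items()}


def scrub(manifest: Dict[str, str], replica: Dict[str, bytes]) -> List[str]:
    """Return the sorted IDs of blocks that are missing or corrupted in a replica."""
    damaged = []
    for block_id, expected in manifest.items():
        data = replica.get(block_id)
        if data is None or checksum(data) != expected:
            damaged.append(block_id)
    return sorted(damaged)


def repair(replica: Dict[str, bytes], healthy: Dict[str, bytes],
           damaged: List[str]) -> None:
    """Overwrite damaged blocks in place with copies from a healthy replica."""
    for block_id in damaged:
        replica[block_id] = healthy[block_id]
```

In a hybrid environment, the manifest would be the piece shared between the enterprise and the cloud vendor: each side can scrub on its own schedule, but both compare against the same recorded checksums.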

Faster Big Data

The race to accelerate big data is most obvious in the financial services world, which was recently exposed to the masses through narratives such as Michael Lewis's "Flash Boys," which revealed the edge gained by perceiving market interactions milliseconds ahead of competitors. In the world of high-frequency trading, there has always been a race to get faster data, whether through hacks such as using high-performance video processing chips to conduct transactions more quickly or through purchasing extreme low-latency messaging from vendors such as IBM WebSphere, TIBCO Low Latency Messaging, Informatica Ultra Messaging or Solace Systems.

Use cases like this reflect only the beginning of the need for faster big data. One of my predictions is that sports science is about to provide the enterprise with its biggest data lesson since Moneyball. All of the top professional sports leagues across baseball, basketball, football (both real and American) and hockey have now implemented some form of camera-based movement detection and embedded sensors within uniforms, on either an experimental or a formal basis.

This means that we now have several laboratory experiments in closed, rule-based environments that use these cameras and sensors to analyze teamwork in very specific ways. As a result, these leagues will probably end up collecting a fair amount of data, perhaps 50 to 100 megabytes of relevant data or even more.

And then the challenge of faster big data will come into play. During a 30-second break, coaches will want to access all of that information, get a recommendation and transform it into an in-game adjustment. Because reviewing the data recommendation will be only a fraction of the work that the coach needs to do during a timeout, the real issue will be providing an intelligent recommendation that is visually understandable, actionable and demonstrably superior to human judgment within only a few seconds. This challenge is formidable enough that professional sports will probably take several years to crack the code.

Once sports teams start using the "Internet of Sensor Things" to make in-game decisions, a new kind of teamwork-based analysis will make its way into the enterprise. With this level of insight, we will start to make process changes based on millions of interactions that fit our very specialized business challenges, without having to wait weeks, months or even years on team and culture-based tests and demos that currently cannot be simulated. At this point, technology and culture will truly start to blend together to improve strategic corporate initiatives such as team optimization, cultural fit and succession management.

Faster big data isn't just about making data flow faster from a technical perspective. The big picture for faster big data is a world where data processing and visualization allow us to integrate technology, culture and strategy into a cohesive whole. When that occurs, we will start to rebuild the enterprise with the promises of big data. We have the technology, but we are still working on the implementation.

The most advanced companies on the planet right now are those that use their developers to grow at scale, such as Facebook and Google. But the next level of scale will come from the real-time use of big data to make effective decisions throughout the organization. The real question is which company will be the first to become truly bionic in its use of big data: Better. Stronger. Faster. Who will be that company?

Title image by James Vaughn (Flickr) via a CC BY-NC-SA 2.0 license