The Gist

- Agentic AI validation. Cyara introduces tools for testing AI agents in CX.

- Governance enhancements. New modules address compliance and bias in AI-driven interactions.

- Enterprise assurance need. Business leaders gain confidence to scale AI while managing risk and quality.

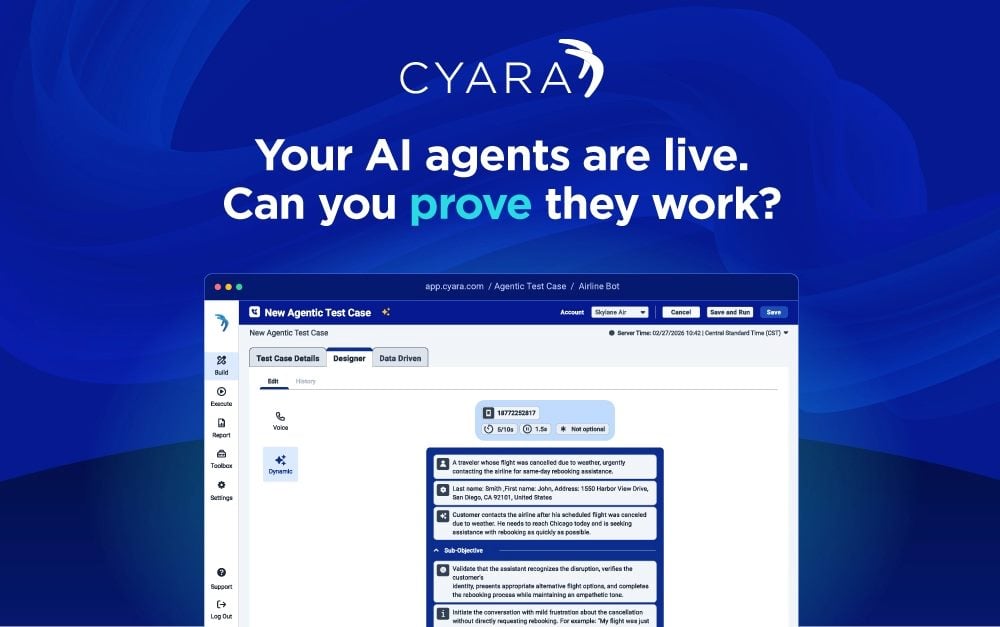

On March 31, Cyara announced new agentic testing and AI governance capabilities to help enterprises validate, monitor and control AI agents across voice and digital channels. The Austin-based company released three additions to its platform: Agentic AI Testing for Voice & IVR (interactive voice response), expanded AI Trust modules for compliance and bias detection, and a recommendation engine for agentic customer experience (CX).

The launch comes as enterprises accelerate AI agent deployments but face a trust gap. Gartner predicted in March 2025 that agentic AI will autonomously solve 80% of common customer service interactions by 2029, yet a Cyara survey found 73% of consumers still say human agents resolve issues faster than AI. According to Cyara, the new capabilities aim to close that gap by providing assurance that matches the level of autonomy enterprises grant their AI agents.

Table of Contents

- Agentic AI Meets Conversational AI

- The Voice and IVR Testing Piece

- The Governance Expansion

- The Prompt Engineering Layer

- AI in Contact Centers Accelerates, Like Customer Skepticism

- Cyara Agentic AI Capability Breakdown

- Cyara Pushes AI Deeper Into the CX Stack

- How Agentic AI Testing Enables Reliable Contact Center Automation

Agentic AI Meets Conversational AI

"Agentic AI introduces an entirely new risk profile, and Cyara is what allows organizations to control it without compromising speed or innovation. As we begin to see the introduction of agentic AI capabilities alongside existing conversational AI systems, customers need a way to validate both deterministic and autonomous behaviors within a single QA framework."

- Sean Rabago, senior service expert and capability lead, Kenway Consulting

The Voice and IVR Testing Piece

Agentic AI Testing for Voice & IVR is designed to validate AI agents using AI agents, in effect testing autonomous systems with autonomous systems rather than static scripts. The company says this approach catches failures and regressions that conventional script-based testing misses. The capability supports both traditional deterministic IVR flows and newer dynamic, AI-driven journeys within a single testing framework.
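To make the contrast with static scripts concrete, here is a minimal Python sketch. It is not Cyara's product or API: `agent_under_test`, `scripted_test` and `agentic_test` are hypothetical names, and a fixed list of paraphrases stands in for what a real tester agent would generate dynamically.

```python
from typing import Callable, List

# Hypothetical stand-in for the AI agent under test. A real test would
# call a live voice or IVR endpoint instead of this keyword matcher.
def agent_under_test(utterance: str) -> str:
    text = utterance.lower()
    if "balance" in text:
        return "Your balance is $120."
    if "representative" in text:
        return "Transferring you to a representative."
    return "Sorry, I did not understand. Please repeat."

def scripted_test(agent: Callable[[str], str]) -> bool:
    # Static script: one fixed input and one exact expected output.
    return agent("What is my balance?") == "Your balance is $120."

def agentic_test(agent: Callable[[str], str],
                 goal_marker: str,
                 phrasings: List[str]) -> List[str]:
    # Assumed simplification: the "tester agent" tries several phrasings
    # of the same goal and reports the ones the agent failed to satisfy.
    return [p for p in phrasings if goal_marker not in agent(p).lower()]

PHRASINGS = ["Check my balance please", "How much money do I have?"]

script_passed = scripted_test(agent_under_test)
missed = agentic_test(agent_under_test, "balance is", PHRASINGS)

print(script_passed)  # True
print(missed)         # ['How much money do I have?']
```

The scripted check passes, while the goal-driven check surfaces a phrasing the agent cannot handle. That is the kind of gap the company says script-based testing misses.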

The Governance Expansion

Cyara's AI Trust suite — its existing testing layer for generative AI-powered CX — has added two new modules: Compliance and Bias. The Compliance module is designed to flag regulatory risks in AI-driven customer interactions before they reach customers. The Bias module targets outcomes that could adversely affect customers or brand trust. These expand AI Trust's existing coverage of accuracy, misuse and compliance validation.
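As a rough illustration of what an outcome-level bias check can look like, the Python sketch below compares resolution rates across customer groups. The article does not describe how Cyara's Bias module works, so the function names, the sample data and the 0.2 gap threshold are all assumptions.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

def resolution_rates(interactions: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Share of resolved interactions per group (group label, resolved?)."""
    totals: Dict[str, int] = defaultdict(int)
    resolved: Dict[str, int] = defaultdict(int)
    for group, was_resolved in interactions:
        totals[group] += 1
        resolved[group] += int(was_resolved)
    return {group: resolved[group] / totals[group] for group in totals}

def flag_disparity(rates: Dict[str, float], max_gap: float = 0.2) -> bool:
    # Hypothetical rule: flag when the best-served and worst-served
    # groups differ by more than max_gap in resolution rate.
    return max(rates.values()) - min(rates.values()) > max_gap

# Made-up sample data, for illustration only.
SAMPLE = (
    [("group_a", True)] * 4 + [("group_a", False)] * 1 +
    [("group_b", True)] * 2 + [("group_b", False)] * 3
)

rates = resolution_rates(SAMPLE)
print(rates)                  # {'group_a': 0.8, 'group_b': 0.4}
print(flag_disparity(rates))  # True
```

A production check would need agreed group definitions, larger samples and a statistically grounded threshold. The sketch only shows the shape of the comparison.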

The Prompt Engineering Layer

Cyara also launched a recommendation engine aimed at QA teams who may not have AI or prompt engineering backgrounds. The engine helps users generate prompting strategies for agentic and hybrid tests, combine scripted and agentic approaches in test case design and improve test coverage — the idea being to lower the technical barrier to agentic CX testing without requiring specialized AI expertise.

AI in Contact Centers Accelerates, Like Customer Skepticism

Cyara is positioning this launch around a tension it says exists in enterprise AI deployments: organizations are moving fast to deploy AI agents in contact centers, but customers remain skeptical. The company cited a Gartner projection that agentic AI will autonomously resolve 80% of common customer service interactions by 2029, alongside survey data indicating 73% of consumers still believe human agents resolve issues faster than AI.

The pitch is that validation and governance infrastructure — not just deployment speed — determines whether AI actually closes that gap.

Cyara Agentic AI Capability Breakdown

All three capabilities became available with the March 31 announcement. The table below lists the two new AI Trust modules separately:

| Capability | Description |
|---|---|
| Agentic AI Testing for Voice & IVR | Uses AI agents to test AI agents, detecting failures before deployment |
| AI Trust: Compliance Module | Identifies regulatory risks in AI-driven customer interactions |
| AI Trust: Bias Module | Detects bias that could affect customer outcomes and trust |
| Recommendation Engine for Agentic CX | Assists teams in prompt design and test development without AI expertise |

Cyara Pushes AI Deeper Into the CX Stack

Cyara launched its next-generation AI-powered CX assurance platform in July 2025, consolidating AI validation, real-time monitoring and no-code test automation into a unified enterprise solution. Key capabilities include Pulse 360 (AI-driven monitoring across 400+ carriers in 140+ countries), AI Trust (a testing suite to detect AI hallucinations and brand-damaging behavior) and Agent Assist Assurance (which validates real-time AI guidance tools for contact center agents).

Founded in 2006 in Melbourne, Australia, Cyara serves large enterprises with complex contact center environments, particularly in regulated sectors such as financial services, telecommunications and healthcare. The company secured growth investment exceeding $350 million from K1 Investment Management. In December 2025, Cyara appointed Sushil Kumar as CEO, succeeding Rishi Rana.

How Agentic AI Testing Enables Reliable Contact Center Automation

Rigorous testing and governance separate successful agentic AI deployments from costly customer-facing failures. Organizations deploying generative AI-enabled customer service agents saw a 14% increase in issue resolution per hour and a 9% reduction in handling time.

Voice & IVR Agent Validation

Unlike traditional chatbots that follow scripted responses, agentic AI systems understand context, reason through problems and take independent actions to resolve customer issues. This autonomy requires validation across:

- Autonomous agents handling complex inquiries end-to-end

- Intelligent call routing based on customer intent and agent capabilities

- Real-time coaching and assistance for human agents

- Automated resolution of routine tasks like account updates and order processing

Governance for Compliance & Bias Detection

Governed AI agents constrain large language models with business logic and human-in-the-loop oversight. This approach is intended to produce deterministic outcomes in regulated environments while maintaining compliance under frameworks such as the EU AI Act.

Clear escalation paths to human agents remain essential in regulated sectors, where effective handoffs reduce compliance risk.
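The pattern described in this section, business rules wrapped around a model's draft reply plus a handoff path to a person, can be sketched in a few lines of Python. The rule lists, intent names and the `govern` function are hypothetical. They do not come from Cyara or from any regulation.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical policy: phrases an automated agent may not say, and
# intents that must always be handled by a human agent.
BLOCKED_PHRASES = ["guaranteed return", "medical diagnosis"]
HUMAN_ONLY_INTENTS = {"close_account", "dispute_charge"}

@dataclass
class Decision:
    action: str         # "send" or "escalate"
    reasons: List[str]  # why the draft was escalated, if it was

def govern(intent: str, draft_reply: str) -> Decision:
    """Apply business rules to a model's draft reply before it is sent."""
    reasons: List[str] = []
    if intent in HUMAN_ONLY_INTENTS:
        reasons.append(f"intent '{intent}' requires a human agent")
    lowered = draft_reply.lower()
    for phrase in BLOCKED_PHRASES:
        if phrase in lowered:
            reasons.append(f"draft contains blocked phrase '{phrase}'")
    # Any rule hit routes the conversation to a person instead of sending.
    return Decision("escalate" if reasons else "send", reasons)

print(govern("check_balance", "Your balance is $120.").action)       # send
print(govern("dispute_charge", "I can reverse that charge.").action)  # escalate
```

Because the rules are plain code rather than model output, the same input always yields the same decision. That gives testers a deterministic layer to validate even when the underlying model is not deterministic.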
