The Gist

- Real CX work happens below the keynote level. Beyond big AI promises, practitioners focus on operational details like automation, standardization and feedback loops that actually improve experiences.

- Reducing friction beats adding more technology. From automated notes to survey standardization, both leaders prioritize removing operational noise over layering on complexity.

- AI succeeds when scoped and disciplined. Chime applies AI in low-risk, high-confidence scenarios with strict guardrails, avoiding the trap of over-automation.

- Listening systems must be built, not assumed. The PGA Tour’s Voice of the Gallery and Fan Council show how structured feedback enables consistent, actionable insights at scale.

- Incremental progress outperforms transformation hype. Both organizations favor small, targeted improvements over sweeping change, focusing on solving real problems rather than chasing innovation narratives.

Editor's note: This article's featured subjects in the video above chime in starting at 12:24.

SEATTLE — How many angles of the AI and customer experience conversation can there be? Quite a few, we've found.

Sweeping transformation narratives. Bold vendor promises. Keynote-ready statistics.

And then there is the version that happens in the hallways and breakout rooms of a conference like Qualtrics X4, here this week at the Seattle Convention Center, where the people actually doing the work talk about automated notes, confidence thresholds and whether the latest post-event survey has the right standardization baked in.

Where Real CX Work Actually Happens

I caught up with two practitioners who live firmly in the second category:

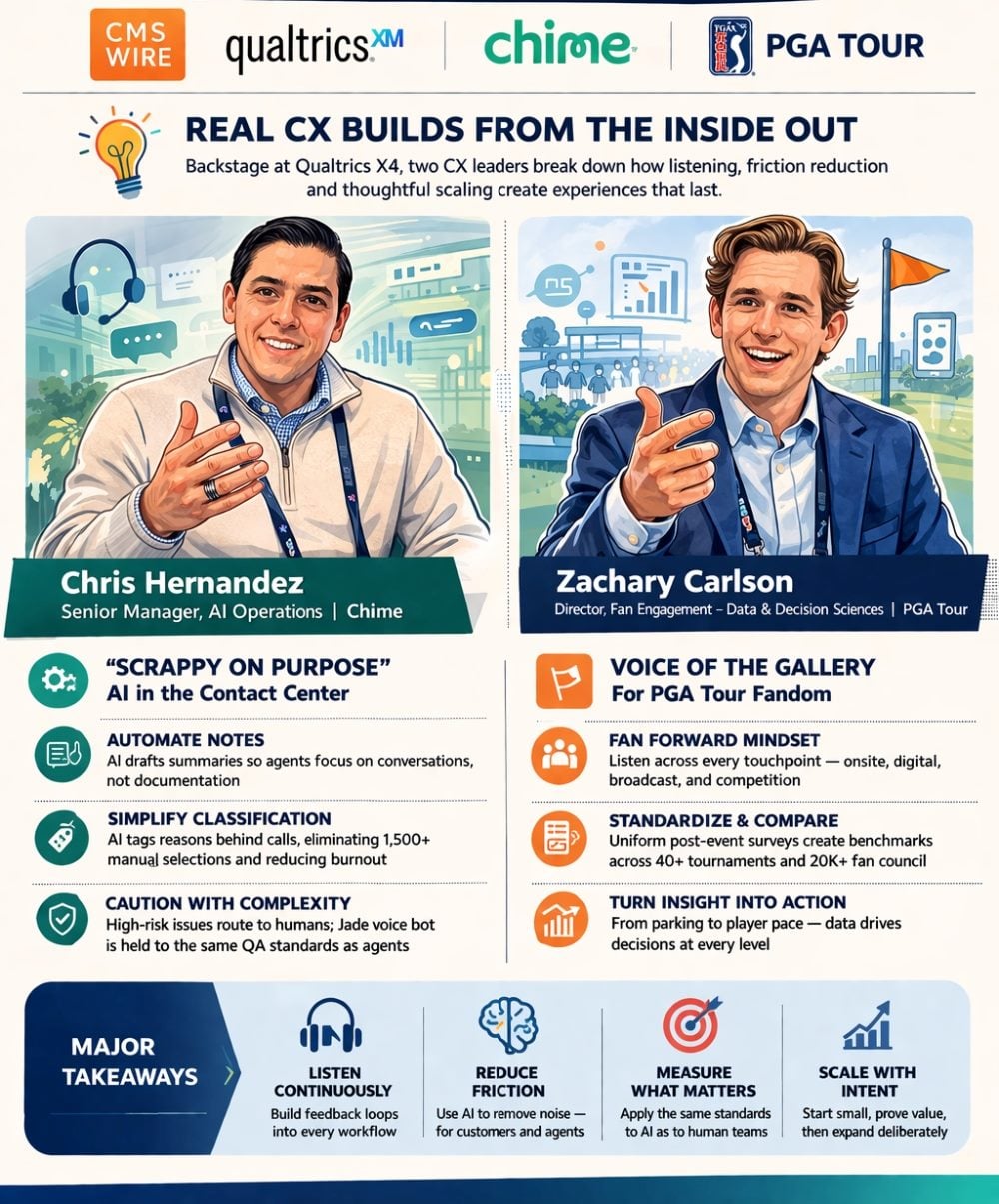

Chris Hernandez is senior manager of AI Operations at Chime, where he leads a small, specialized team embedded inside the contact center operation.

Zachary Carlson is director of Fan Engagement — Data and Decision Sciences at the PGA Tour, where he oversees a fan research function spanning digital properties, on-site events and a community of over 20,000 engaged fans who provide direct feedback on experiences.

Their industries could hardly be more different. But the principles guiding their work — build deliberately, listen continuously and resist the urge to overcomplicate — are strikingly aligned.

Table of Contents

- Scrappy on Purpose: The Chime Approach to AI Operations

- The PGA Tour's Listening Infrastructure

- The Shared CX Discipline: Solve the Right Problems

Scrappy on Purpose: The Chime Approach to AI Operations

Hernandez did not come up through a traditional technology track. He started as a call center agent — an experience that gave him a ground-level view of everything that wasn't working.

"I quickly saw, like, man, there's a lot of things that could be changed in this industry, and there's a lot of manual processes," he told CMSWire. "And that also inspired this curiosity of fixing waste and removing waste from business. And that Lean Six Sigma approach was very interesting. And so when you apply that plus technology, it was like, 'Oh man, we could do both really, really easily.'"

That combination of operational discipline and technical curiosity now defines the team he leads inside Chime's OMX — Operations Member Experience — organization. His group is, in his words, "a unicorn" within the broader operation: the most technical unit in a function built around running the business, responsible for building and maintaining the AI-powered tools that agents and contact center infrastructure rely on.

The scope is deliberately narrow. Hernandez's team focuses on customer-facing AI — not employee-side governance or internal tooling. Within that lane, the emphasis is on reducing what he calls "cognitive load": the accumulated mental burden that makes contact center work so punishing at scale.

Reducing Agent Friction With Targeted AI

Two signature initiatives address this directly. The first is automated notes: an AI model that takes manual note-taking off agents' hands so they can stay focused on the interaction itself. The second is automated contact reason classification, which eliminates the need for agents to tag calls from an overwhelming real-time menu. Hernandez described what that used to look like:

"Being on a call and trying to select from 1,500 contact reasons while you're on the call, wrapping up, plus you have your average handle time and all these metrics that you're trying to get down, and it just becomes more noise to the agents."

The relief those changes produced was immediate and tangible. "Even those little wins, like automated contact, you never have to classify your case anymore," he said. "And those are the sighs of relief that I hear throughout the BPO. And I think we're slowly chipping at it. ... We're not throwing AI at every solution."

That measured, incremental approach extends to how the team thinks about AI deployment more broadly. Chime uses a voice AI named Jade — intentionally named, Hernandez noted, to make the interaction feel as human as possible. But Jade is not positioned as a universal handler. The team has built conversational AI design guidelines and guardrails that route interactions appropriately, with a particular emphasis on removing friction at the handoff point.

"The idea of having to press '0' 5,000 times and scream agent 100 times to get an agent — that's just causing friction," he said. "If it's a topic that we know we shouldn't talk about, and the customer wants to talk, get them to an agent, to a live human. There's no need to create that friction point."

Measurement holds Jade to the same standards as human agents. Hernandez described a QA scorecard equivalency: whatever BPO agents are held accountable for, Jade is measured against the same outputs. "The standards don't go away because it's AI," he said. "If anything, it's multiplied."

Our 'First Line of Defense': Customer Sentiment and Feedback Data

Sentiment and feedback data runs through a combination of Qualtrics speech analytics and text analytics tools — what Hernandez called the team's "first line of defense" in understanding how members experience AI-handled interactions. He acknowledged the measurement challenge honestly: customers tend to express frustration with AI once they reach a live agent, not during the automated interaction itself.

Operating in a highly regulated fintech environment also means the team is conservative about where AI takes the lead. Low-risk, high-confidence interactions are where automation thrives; anything touching regulatory complexity is where human agents stay in the loop.

At his X4 session, one of the most-discussed topics was using large language models to set confidence levels on high-risk interactions — letting a model serve as a judge for when alerts should fire. His advice to the room cut against the industry's tendency toward overengineering: "I don't think any of that is still required, because you could be a little scrappy in certain instances."
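To make the low-risk, high-confidence idea concrete, here is a minimal sketch of what a scrappy, rule-based router might look like, with no model acting as judge. It is an illustration only, not Chime's implementation: the topic names, the 0.85 threshold and the `Interaction` and `route` names are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical values for illustration; not Chime's actual configuration.
CONFIDENCE_THRESHOLD = 0.85
HIGH_RISK_TOPICS = {"dispute", "fraud", "regulatory_complaint"}


@dataclass
class Interaction:
    topic: str                     # contact reason predicted by a classifier
    confidence: float              # classifier confidence, 0.0 to 1.0
    asked_for_human: bool = False  # customer explicitly requested an agent


def route(interaction: Interaction) -> str:
    """Return "agent" or "ai" for a single interaction."""
    # A customer who asks for a person gets one, with no extra hoops.
    if interaction.asked_for_human:
        return "agent"
    # Anything touching a high-risk topic stays with a human.
    if interaction.topic in HIGH_RISK_TOPICS:
        return "agent"
    # Low-risk topics are automated only when the classifier is confident.
    if interaction.confidence >= CONFIDENCE_THRESHOLD:
        return "ai"
    return "agent"
```

The order of the checks carries the design choice: the request for a human and the risk check both come before the confidence check, so a confident model can never override either one.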

The PGA Tour's Listening Infrastructure

Zachary Carlson's challenge looks quite different on the surface, but the underlying question is the same: how do you build a systematic, scalable way to understand what your audience actually wants — and then act on it?

At the PGA Tour, that question is complicated by the organization's structure. The Tour does not own and operate most of its events. The four major championships are run by entirely separate organizations. Even within the Tour's own event ecosystem, only a handful of tournaments are fully owned and operated.

Standardizing Feedback Across a Fragmented Experience

Just this week, Carlson's team rolled out a Voice of the Gallery program with Qualtrics: a standardized post-event survey framework measuring the consistent elements of the fan experience across every event, including merchandise, food and beverage, hospitality and parking. The goal is not just data collection but a basis for comparison and, eventually, competition among events.
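For readers who want to picture what a basis for comparison can mean in practice, here is a minimal sketch that measures each event against the average of similar events rather than against the whole field. Everything in it is an assumption for illustration: the scores are made up, the 1-to-5 scale and the size cohorts are hypothetical, and `cohort_gaps` is not a PGA Tour or Qualtrics function.

```python
from collections import defaultdict
from statistics import mean

# Made-up scores for illustration only; not PGA Tour data.
# Each record: (event, size cohort, category, average score on a 1-5 scale)
responses = [
    ("Event A", "large", "parking", 3.0),
    ("Event B", "large", "parking", 4.0),
    ("Event C", "small", "parking", 4.5),
    ("Event D", "small", "parking", 3.5),
]


def cohort_gaps(records):
    """Return each event's score minus its cohort average, per category."""
    by_cohort = defaultdict(list)
    for _event, cohort, category, score in records:
        by_cohort[(cohort, category)].append(score)
    averages = {key: mean(scores) for key, scores in by_cohort.items()}
    return {
        (event, category): score - averages[(cohort, category)]
        for event, cohort, category, score in records
    }


gaps = cohort_gaps(responses)
```

A positive gap means an event beats comparable events on that category; a negative gap flags where it trails them. Comparing within a cohort keeps a small event from being judged against the logistics of a much larger one.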

"Being able to measure against a real comparison and let tournaments see how well they're doing in these aspects that are consistent to the entire fan experience — we see that as a huge tool to empower them to make the events better," Carlson told CMSWire.

Voice of the Gallery sits under a broader strategic umbrella Carlson calls Fan Forward — an initiative launched last year that he described less as a single program and more as an organizing philosophy. The idea: listen to what fans want, make decisions around that feedback, and trust that the business outcomes follow. The research base now runs to tens of thousands of responses, potentially over 100,000.

Fan Forward breaks down into four work streams: on-site experience, broadcast, player content and profiles, and competition. Carlson described the inspiration: Major League Baseball's pitch clock and rule changes, which were researched, tested and then measured for impact. The PGA Tour, though, has to think differently about change.

"We have hundreds of golf balls in the air at any given time," he said. Speed of play — a perennial fan pain point — illustrates the complexity. Rather than prescribing a solution, Carlson framed the research question honestly: "What's the remedy for that? Are there competition adjustments that you can make, or is it potentially how it's presented when the fan sees it on broadcast or streaming, and if you don't see someone take a really long time to hit a golf ball, did it matter?"

Fan Forward Feedback Drives Decision-Making

One area where Fan Forward feedback has translated directly into on-site action is in-event logistics. At owned and operated events, teams respond to fan feedback as it arrives. "We have teams standing by that are getting in the moment feedback about things that need to be addressed on site," Carlson said, "whether it's more food in a hospitality area, an issue with the restrooms, an issue with things not being available in the merchandise tent."

The PGA Tour does not have a traditional contact center. Instead, the organization built the PGA Tour Fan Council — now over 20,000 members — as a direct channel between fans and the organization. It functions as both a research panel and an open feedback mechanism.

"That is the place where you can have your voice heard by the PGA Tour," Carlson said, "and then on us to deliver that."

Carlson also touched on the employee experience side, where his team helped stand up an engagement measurement program during the Tour's significant organizational transition — from a nonprofit structure to PGA Tour Enterprises. He saw the parallels clearly: "Listen, implement, measure, rinse, repeat. That's applicable to both sides of experience."

The Shared CX Discipline: Solve the Right Problems

A few themes emerge from both conversations that are worth naming for any CX practitioner trying to translate conference energy into Monday-morning reality.

Neither Hernandez nor Carlson is chasing comprehensive solutions. Hernandez warned explicitly against throwing AI at every problem — the fix might be a process or a people issue, not a technology one. Carlson described Fan Forward as hundreds of small initiatives rather than one grand transformation. The discipline in both cases is knowing what problem you're actually solving.

Both are also deeply attentive to friction — not just in the customer experience itself, but in the operational systems that generate it. The automated notes project at Chime is a story about what happens when agents are asked to manage too many competing tasks on a live call. The Voice of the Gallery standardization effort at the PGA Tour is not primarily a data story; it's about creating conditions in which tournament operators can actually act on what they learn.

And both are appropriately cautious about scale. Hernandez described Chime's requirement for a thorough end-to-end evaluation of any new technology — covering data handling, model behavior, privacy and governance — before any partnership moves forward. Carlson is building comparison frameworks with deliberate care for context: event size, market size, timing, cohort groupings.

Qualtrics President of Product and Engineering Brad Anderson framed the opportunity in terms that both Hernandez and Carlson would recognize: the data is already there; it just needs to be heard.

"If I compare a survey, an average survey has 15 questions, three open-ended questions, and I compare it to an average call with a call center, which is seven minutes long," Anderson said, "I get 80 times the number of sentences coming in from the call center that I do from the survey. So now the amount of data that you have to be able to understand the context and to be able to understand exactly what the individual is feeling and what they want to do, that's why it's such a goldmine, because you get so much more data to be able to learn from."