Integration is a word you hear pitched by a variety of IT service providers and product vendors. It’s one of those lofty goals to be achieved through concerted effort, like collaboration, alignment and democracy.

And yet you may be left with this question: What is it?

You think you have a handle on it. Surely it’s as simple a concept as making all the software in your organization work seamlessly together, so that people can work with it just as seamlessly.

Then you tally up the angles, the impacts and the target zones of all those multiple pitches, and you may be left wondering why you’re more confused now than you were.

There are many different components to the job of software integration. Some vendors specialize in one or two of those components, and thus they tend to portray the concept from their native perspectives.

A thorough evaluation of the complete job of integration in your enterprise requires a polling of several vendors and service providers who have a stake in that job, whatever that stake may be, followed by an effort to connect the dots to form a more cohesive image in your mind. With this CMSWire Discussion Point, we’ve begun those first two tasks on your behalf.

Specifically, we asked some companies with names you may or may not know, each with a different stake in the field of integration, for their take on the issue.

Intentionally, we asked an open-ended question. The differences in vendors’ responses may yield important clues as to how they perceive the proverbial elephant in the room differently, and what end of the elephant they’re on.

You’ll notice right away, though, that there are (at least) two sides to this issue. We’ll hear from one source that asserts that integration is something a vendor should provide for you, and another that insists that the people in your IT department are already on the case, assuming they want to keep their jobs.

The Question

How should today’s software be made interoperable with yesterday’s, and who should do the work?

The Answers

Jim Scott, Director of Enterprise Strategy & Architecture, MapR

Systems integration is a tricky topic, because systems aren’t always built with the notion of playing nicely with others. Systems built to support yesterday’s business are a part of our legacy and need to be treated as such. Each opportunity to solve tomorrow’s business problems is an opportunity to reconsider the inclusion of legacy systems, for better or worse.

The responsibility for interoperability falls squarely on the shoulders of the vendor building the product.

Some people may think that is a bit bold as it may imply that each vendor is accountable for ensuring interoperability with every other system. That is exactly the point. Standards exist for a reason, and if a standard exists, it should be in every vendor’s best interest to support standards for interoperability.

The better the support for platform interoperability, the stronger the competitive advantage in the marketplace. Interoperability makes it easier for customers to integrate a product into how they run their business. Enabling customers to succeed is the most important factor.

As an example, if I create a new file system and I want someone to buy it from me, the best thing I could do would be to ensure that it works exactly as all other file systems and adheres to the defined set of standards (e.g., POSIX, NFS). And it comes down to more than just an API; it semantically must act the same for opening, closing, and randomly reading / writing files. These standards have been in place for the last 30+ years.

Taking one step beyond, if I were storing data for my enterprise application, I would want to ensure that data can be persisted in a format that allows simplified portability by the consumer. Leveraging a standard data format like JSON would be a huge benefit, and it makes “future-proofing” that much easier. This helps to reduce consumers’ risk, and puts them in control of how to solve their problems.

With all of the great tools in the big data space that can perform distributed computation, what stops everyone from using them? The answer is, integration with existing systems. Customers want tools to work in their environment without the need to reinvent all the wheels (connectors) themselves.

Apache Drill is an excellent example, and a solution to this problem. It was built to plug into multiple data sources, to read a variety of different file formats, and to enable users to interact with it via well-defined standards: ODBC, JDBC and ANSI SQL:2003. People use Drill because it easily integrates with products built for yesterday, while supporting the business needs of tomorrow.

From Scott’s perspective, the purpose of data is to be available, not to be secluded or segregated or compartmentalized. Integration, as MapR’s customers portray it to him, is the act of making data available to all sources that can make use of it.
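Scott’s point about portable data formats can be made concrete with a minimal sketch. It assumes a hypothetical order record (the field names are invented for illustration, not taken from any MapR product) and uses only Python’s standard `json` module to show that a record persisted as JSON can be read back by any consumer without vendor-specific tooling.

```python
import json

# Hypothetical record an enterprise application might persist.
order = {
    "order_id": 1001,
    "customer": "Acme Corp",
    "items": [{"sku": "A-17", "qty": 3}],
    "total": 74.85,
}


def export_record(record: dict) -> str:
    """Serialize a record to JSON text with a stable key order."""
    return json.dumps(record, sort_keys=True, indent=2)


def import_record(text: str) -> dict:
    """Parse JSON text back into a plain dictionary."""
    return json.loads(text)


exported = export_record(order)     # what the producer writes out
restored = import_record(exported)  # what any consumer can read back
```

Because the exported text is plain JSON, the consumer side could just as easily be written in another language or run inside another vendor’s tool, which is the kind of risk reduction Scott describes.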

Not everyone will agree with Scott’s contention that it is entirely the vendor’s responsibility to make data ubiquitously available. Other vendors will make the case that enterprise IT departments should have carefully constructed, unique integration plans, and that their continuous integration and continuous delivery (CI/CD) processes should align with those plans.

Avi Cavale, Co-Founder & CEO, Shippable

Applications tend to live a lot longer than we estimate. Over time, they get hacked to do things they were not intended for, mutating into black box monoliths.

During my Microsoft tenure in 2006, I developed a back-office platform for Microsoft Office 365. Our core IT back-office was a mishmash of applications developed in the ‘90s. It was built to handle a few large transactions, and not the volume of a SaaS business. Our new platform had to interface with this legacy mishmash. Sales transactions, revenue recognition, business reporting, etc., all had to somehow end up here.

I used a three-step approach to achieve integration:

- Build a shim API layer that fronts all legacy applications that you care about. The API layer has to support modern protocols and, in our case, a REST-based HTTP interface.

- Create an enterprise service bus (ESB) that talks to the shim API layer. Integrate your legacy workflows through the ESB. This provides you with abstraction, guaranteed message delivery and loose coupling.

- Identify isolated and self-contained pieces of functionality in the legacy systems. They are candidates for microservices, if you want to modernize the architecture. They will continue to sit behind the ESB and leverage the benefits of a decoupled architecture.

This three-step approach is both cost effective and predictable. You can modernize any IT infrastructure. The issue now is, who is going to do it?

And the more pressing question is, who pays for it?

My short answer is, it depends. If an IT organization is trying to modernize itself and stay relevant to its customers, IT will do it.

In my case, the Microsoft Office 365 product team needed this more than IT wanted it. My team ended up both paying for it and implementing it, with a few key IT resources as consultants. This was only possible with air cover from the Office 365 executive leadership team. Without that, “Modernizing IT” was a non-starter.

Cavale’s terminology here is not so foreign that you can’t picture the gist of what he’s talking about. Even when an organization is working to wean itself off of old software, it needs to implement some mechanism that enables it to communicate.

This is the key problem: Old software (usually circa 1996 or earlier) was not designed for a network, thus it wasn’t designed to communicate. It knows how to save data to a file, and Cavale’s shim would be one way to make such a file into a kind of communications proxy.
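A rough sketch of the first two steps Cavale describes might look like the following. Everything here is an assumption made for illustration: the pipe-delimited file layout, the `LegacyShim` and `MiniBus` classes and the `sale.recorded` topic are hypothetical, and the toy in-process bus offers none of the guaranteed delivery a real ESB would provide. It only shows the shape of the idea, in which a shim turns a legacy file into structured records and a bus decouples the consumers from it.

```python
import json
import tempfile
from collections import defaultdict
from pathlib import Path

# Hypothetical legacy export format: one pipe-delimited sale per line.
LEGACY_FIELDS = ("sale_id", "customer", "amount")


class LegacyShim:
    """Fronts a legacy flat file with a modern, JSON-friendly read API."""

    def __init__(self, path):
        self.path = Path(path)

    def list_sales(self):
        """Return every sale in the legacy file as a dictionary."""
        sales = []
        for line in self.path.read_text().splitlines():
            if not line.strip():
                continue  # skip blank lines
            record = dict(zip(LEGACY_FIELDS, line.split("|")))
            record["amount"] = float(record["amount"])
            sales.append(record)
        return sales


class MiniBus:
    """Toy in-process stand-in for a service bus: topic-based publish/subscribe."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, topic, handler):
        self._subscribers[topic].append(handler)

    def publish(self, topic, message):
        for handler in self._subscribers[topic]:
            handler(message)


with tempfile.TemporaryDirectory() as tmp:
    # Simulate the file a legacy application would have written.
    legacy_file = Path(tmp) / "sales.dat"
    legacy_file.write_text("1|Acme Corp|120.50\n2|Globex|75.00\n")

    shim = LegacyShim(legacy_file)
    bus = MiniBus()

    # A modern consumer (say, revenue reporting) only knows about the bus.
    revenue = []
    bus.subscribe("sale.recorded", lambda msg: revenue.append(msg["amount"]))

    for sale in shim.list_sales():
        bus.publish("sale.recorded", sale)

    total_revenue = sum(revenue)
    payload = json.dumps(shim.list_sales())  # what a REST endpoint might return
```

In a real deployment the shim would sit behind an HTTP server and the bus would be a durable messaging product, but the division of labor would be the same: the legacy file format is known only to the shim, and everything downstream sees structured messages.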

Guido Appenzeller, Chief Technology Strategy Officer, VMware

Software is changing from a traditional vertically integrated Web/application/database model to a more modern architecture around third-generation applications that are composed of microservices. At the same time, the infrastructure that this software runs on is moving from manually provisioned data centers to highly automated public and private clouds often with a PaaS layer on top. Last, but not least, software that is not related to the core competency of organizations no longer runs on their own infrastructure but is consumed as SaaS.

Software interoperability happens at multiple levels. At the infrastructure level, the focus is on taking legacy applications and packaging them in a way that they can easily run on one common platform with tomorrow’s applications. For example, this requires technology that provides management, storage, connectivity and security for both classic VM and modern, container-based environments. Often, inserting services is necessary to compensate for the lack of built-in security features. At the application level, legacy interfaces need to be converted to modern REST or similar APIs, and wrapped with the necessary level of authentication and access control.

In my experience, it’s often the new application teams that are best at defining requirements, and often it is the team sustaining the old applications that is best suited to do the work.

VMware’s principal product is virtualization, which from a more philosophical-sounding point of view is a kind of abstraction layer. The company sells a way to abstract the connections between software, so naturally Appenzeller casts the topic of integration as taking place on multiple levels.

Notice what happens as a result: In the optimum environment as envisioned by VMware, not even the integration job takes place in a centralized fashion. Appenzeller suggests that it’s best to delegate two aspects of integration to separate teams, with modern developers (VMware’s core market) drafting the specifications, and IT professionals (the “sustainers”) providing the muscle.
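Appenzeller’s application-level point, converting a legacy interface to a REST-style one and wrapping it with authentication and access control, can be sketched in a few lines. The legacy routine, the fixed-width reply format, the token table and the role names below are all hypothetical; a real deployment would rely on an identity provider and a proper web framework rather than an in-memory dictionary.

```python
# Hypothetical legacy routine: takes an account number, returns a fixed-width string
# (5 characters of account number followed by a 12-character, right-aligned balance).
def legacy_lookup(account_no):
    balances = {"00042": 1310.25}
    return f"{account_no}{balances.get(account_no, 0.0):>12.2f}"


# Hypothetical token table standing in for a real identity provider.
TOKENS = {
    "token-reader": {"role": "reader"},
    "token-guest": {"role": "guest"},
}
ALLOWED_ROLES = {"reader", "admin"}


def get_account(account_no, token):
    """REST-style handler for GET /accounts/<account_no>.

    Returns a (status_code, body) pair, as a web framework handler might.
    """
    identity = TOKENS.get(token)
    if identity is None:
        return 401, {"error": "unauthenticated"}  # authentication failed
    if identity["role"] not in ALLOWED_ROLES:
        return 403, {"error": "forbidden"}  # access control failed
    raw = legacy_lookup(account_no)
    # Translate the fixed-width legacy reply into a structured response.
    return 200, {"account": raw[:5], "balance": float(raw[5:])}
```

Called with a valid reader token, the handler returns the legacy data as a structured body; an unknown token is rejected before the legacy code is touched, and a known but under-privileged one is refused access. That is the sort of compensation for missing built-in security that Appenzeller mentions.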

From MapR’s Scott, Shippable’s Cavale and VMware’s Appenzeller, you get the impression that there’s no automatic path to the goal of integration, however that goal might be expressed.


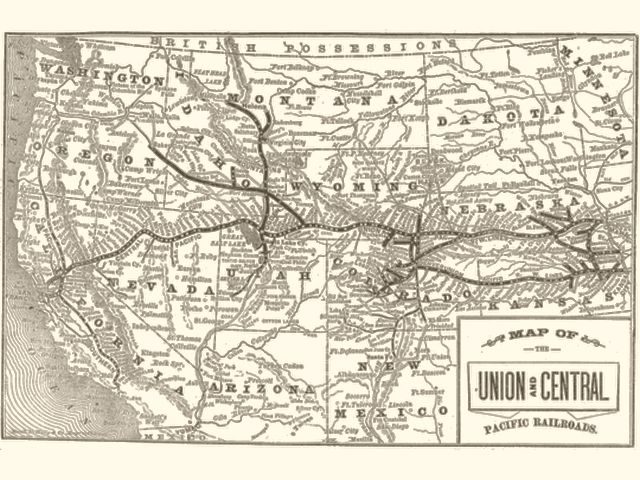

Title image Map of the Union and Central Railroad circa 1865, in the public domain, from Wikispaces