Roger Penrose’s 1989 book, “The Emperor’s New Mind,” argued that true intelligence could not be achieved by a conventional computer. While much of the book’s reasoning has since been challenged, many basic questions remain unanswered.

At the time of the book’s publication, neural networks were small and symbolic AI algorithms were explainable. In the intervening decades, however, a roughly ten-thousand-fold increase in computing power has made deep learning practical, and has brought a corresponding decrease in our understanding of what is going on inside our AI systems.

What AI Transparency Truly Reveals

AI transparency is an effort to explain what is going on inside an AI system that would otherwise have to be regarded as a “black box.” Without such an effort, a trained deep learning system may make numerous correct predictions interspersed with a few outlandish ones, and give no indication of why.

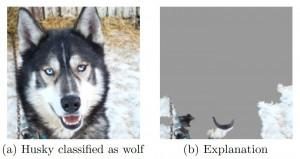

Transparency efforts attempt to explain the reasons for errors so that the system can be improved. Rather than exploring inside a deep learning system, however, transparency methods focus on the few erroneous cases and then make small modifications to the input pattern to see which facets of the input actually affect the output. In this way, we might learn that an AI system misidentifies a dog in a picture as a wolf because there is snow in the image. We can then infer that many of the pictures of wolves in the training set also contained snow.
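The perturbation idea described above can be sketched in a few lines. This is only an illustration under stated assumptions, not any particular transparency tool: `toy_wolf_score` is a hypothetical stand-in for a trained classifier, deliberately built to respond to bright "snow" in the bottom half of a tiny 4x4 image, and `occlusion_sensitivity` is an illustrative name for the routine that blanks one region at a time and records how much the score drops.

```python
from typing import Callable, Dict, List, Tuple

Image = List[List[float]]  # grayscale grid, brightness in [0, 1]


def toy_wolf_score(image: Image) -> float:
    """Hypothetical stand-in for a trained classifier (an assumption).

    Flawed on purpose: the "wolf" score is just the mean brightness of the
    bottom half of the image, so it responds to snow, not to the animal.
    """
    bottom_rows = image[len(image) // 2:]
    pixels = [p for row in bottom_rows for p in row]
    return sum(pixels) / len(pixels)


def occlusion_sensitivity(
    model: Callable[[Image], float],
    image: Image,
    patch: int = 2,
    fill: float = 0.0,
) -> Dict[Tuple[int, int], float]:
    """Blank out one patch at a time and record the drop in the model's score.

    Keys are the (row, column) of each patch's top-left corner. A large drop
    means the model's output depends heavily on that region.
    """
    baseline = model(image)
    height, width = len(image), len(image[0])
    drops: Dict[Tuple[int, int], float] = {}
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            occluded = [row[:] for row in image]  # copy; leave the input intact
            for r in range(top, min(top + patch, height)):
                for c in range(left, min(left + patch, width)):
                    occluded[r][c] = fill
            drops[(top, left)] = baseline - model(occluded)
    return drops


# Top half: the animal (mid-gray). Bottom half: snow (bright).
image = [
    [0.5, 0.5, 0.5, 0.5],
    [0.5, 0.5, 0.5, 0.5],
    [1.0, 1.0, 1.0, 1.0],
    [1.0, 1.0, 1.0, 1.0],
]
drops = occlusion_sensitivity(toy_wolf_score, image)
for region, drop in sorted(drops.items()):
    print(region, round(drop, 3))
```

In this toy case, hiding the animal changes nothing while hiding the snow cuts the score sharply, which is the kind of evidence that would point to a snow-heavy training set. Real methods apply the same probe to an actual trained model and to far larger images.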

At a more fundamental level, we are learning from improved transparency that many of our AIs don’t work in the way we thought they did. We are learning that deep learning systems have a tremendous ability to find commonalities and correlations in huge data sets, an extremely useful and powerful ability, but that they possess no humanlike understanding whatsoever.

The problem is not in our algorithms, but in their names. By calling things “Artificial Intelligence” or “Deep Learning,” we are using words loaded with meanings and implications that, in fact, don’t apply. Learning implies understanding and intelligence implies reason, neither of which forms any part of today’s AI mainstream. If we were to employ terms such as “Advanced Statistical Analysis” and “Deep Correlation” instead, we would shift our attitudes about our current algorithms and their foibles. An algorithm by any other name would be more useful.

What to Expect From AI in the Decade Ahead

This situation is likely to change in the coming decade, as we overcome many of the limitations of today’s AI. To provide an example, consider a 3-year-old playing with blocks. The child knows that physical objects are generally permanent and exist in a physical reality. The child also knows that blocks don’t fall through each other because they are solid, and that round ones roll while square ones do not. He or she knows that a stack of blocks must be built before it can fall down, and so understands the passage of time. Moreover, the child can learn any language that is heard and can use it to describe the surroundings. Today’s AI, typically, does none of these things, but there is no reason to think it will not in the future.

The 3-year-old has a few advantages over any AI. The child gets multi-sensory input and can manipulate objects. That means he or she knows that a block is more than just its appearance or the words used to describe it. The child has an internal mental model of his or her surroundings, and so knows that the blocks still exist even when they can’t be seen or touched. The child can use this mental model to imagine and plan. He or she also has the equivalent of a Universal Knowledge Store, which can create links relating all types of input. With this ability, everything the child learns is placed in the context of everything else learned previously, creating a basis for understanding.

We can say, categorically, that today’s AI doesn’t understand, because we can observe that it contains no equivalent of the 3-year-old’s Universal Knowledge Store. We can say it is not truly intelligent because, lacking an internal mental model, it has no ability to plan.

As these features are added to our AIs in the coming decade, we are likely to see a shift so that a future AI will be, arguably, intelligent and understanding. Along with more humanlike understanding, we will see a shift in AI transparency. We will be able to ask, “Why did you make such-and-such a decision?” and get an answer, “Because such-and-such.”

It is important to recognize, though, that this will be a humanlike explanation and, in a humanlike way, may be a rationalization which may or may not represent the true reasons. The difference is that we will be able to see inside the Knowledge Store and Internal Model in order to know which items actually contributed to the decision and truly understand what makes our future AIs think.
