The Gist

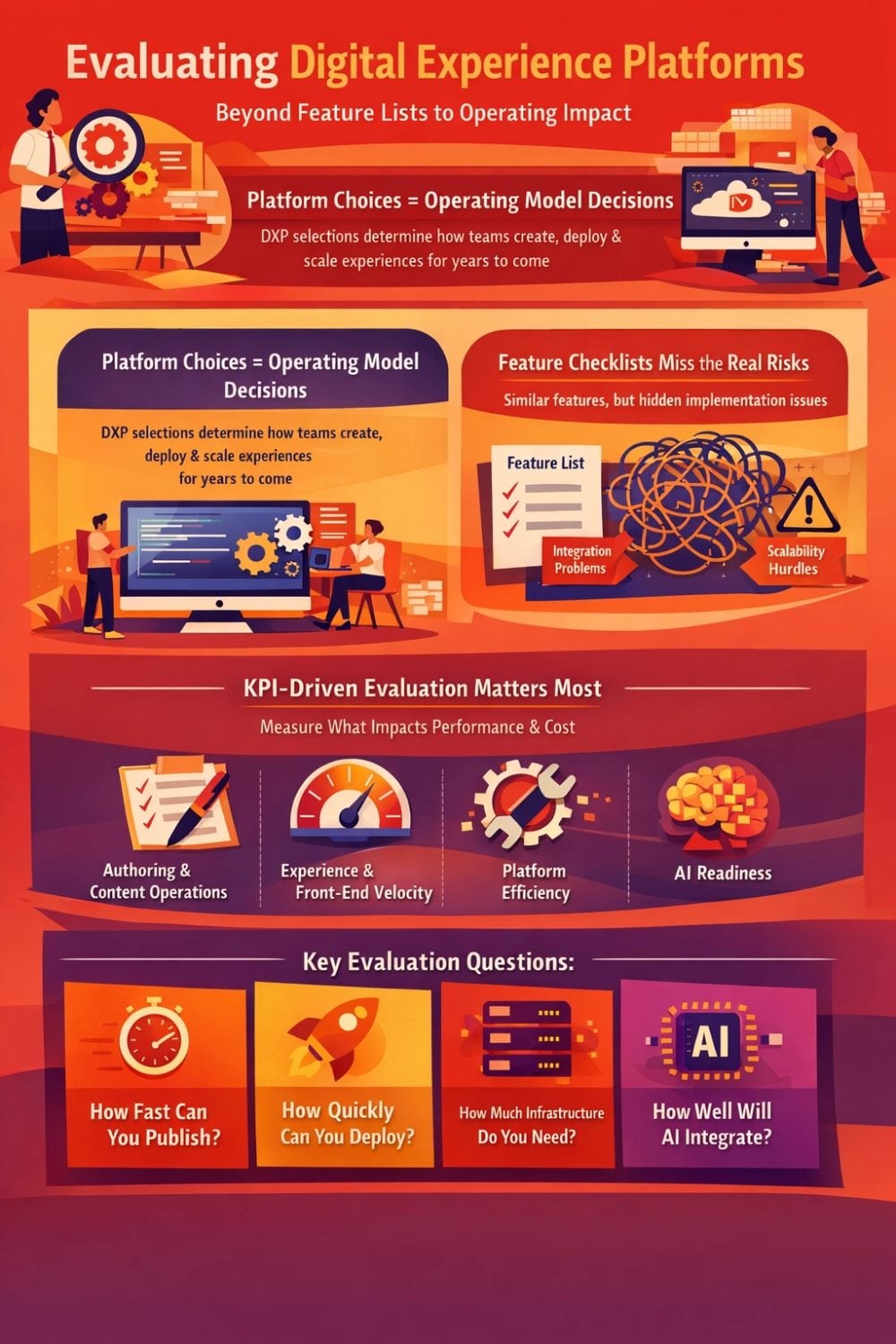

- DXP decisions shape operating models. Platform selections determine how teams create content, release code and scale digital experiences over the next five to seven years.

- Feature checklists miss the real risks. Most modern platforms advertise similar capabilities, but differences in integration depth, governance and workflow design often surface only after implementation.

- KPI-driven evaluation reveals operational impact. Measuring authoring velocity, content operations efficiency, experience performance and front-end release speed exposes how platforms influence long-term productivity and cost.

Editor's note: This is Part 1 of a two-part series examining how enterprises should evaluate digital experience platforms (DXPs). As organizations revisit website and platform architectures in response to SaaS transitions, headless delivery and accelerating AI capabilities, the traditional feature checklist approach is proving insufficient. Part 1 explores why platform decisions are fundamentally operating model decisions and introduces a KPI-driven framework for evaluating authoring experience, content operations, experience performance and front-end velocity. Part 2 will examine operational efficiency, ecosystem depth and AI readiness — and how those factors reshape long-term total cost of ownership.

Across the enterprise market, a renewed wave of DXP and website evaluations is underway. Some organizations are modernizing aging platforms. Others are responding to architectural shifts as vendors move toward SaaS and headless-first models. And increasingly, AI is accelerating the urgency. When platforms introduce new AI layers, data models or delivery patterns, what begins as an upgrade discussion quickly becomes a rebuild conversation.

Once a rebuild is on the table, the competitive field opens up.

Teams that originally planned to stay within their current ecosystem begin looking outside it. Shortlists grow. Demos are scheduled. RFP grids are built. The evaluation process often defaults to what feels measurable and objective: feature comparison.

- Does it support personalization?

- Does it have a DAM?

- Is A/B testing included?

- Can it integrate with our CDP?

On paper, most modern DXPs can check the same boxes.

The problem is rarely feature absence. It is implementation depth, integration cohesion and operating model impact.

Table of Contents

- Frequently Asked Questions About Evaluating Digital Experience Platforms

- From Feature Checklists to KPI-Driven Evaluation

- 1. Authoring Experience & Content Operations

- 2. Experience Delivery & Front-End Velocity

Frequently Asked Questions About Evaluating Digital Experience Platforms

Editor's note: These FAQs accompany Part 1 of CMSWire’s two-part series on evaluating digital experience platforms. This section focuses on why DXP decisions should be assessed through operating model impact, not just feature comparison.

Why Feature Parity Hides Operational Differences

Two platforms may both "support personalization," but one may require significant data mapping and engineering effort to activate it, while another aligns identity, profiles and governance by default. Both may advertise headless delivery, yet one assumes tightly coupled release cycles and the other enables independent front-end deployment. Both may claim AI capabilities, but the depth of workflow integration and governance control can vary dramatically.

The biggest risks in a platform decision are the ones that don't show up in the demo.

Demos highlight what is possible in a controlled environment. They rarely reveal how many teams are involved in publishing a page, how often developers are needed for small changes, how localization scales across regions, how upgrades are handled or how easily new capabilities can be activated without creating integration sprawl.

When evaluations stop at feature parity, organizations miss the operational consequences. The real differences surface after selection, when velocity slows, complexity increases and hidden costs begin to compound.

That is because platform selection is not primarily a feature decision. It is an operating model decision.

Every DXP shapes how your teams create content, release code, manage infrastructure, activate adjacent capabilities and adopt AI. In an environment where architectural change and AI innovation are forcing modernization anyway, the right question is not which platform has the most features.

It is:

How will this platform change the way we operate over the next five to seven years?


From Feature Checklists to KPI-Driven Evaluation

If platform selection is an operating model decision, then the evaluation framework must reflect that reality.

Most enterprise selection processes rely on weighted feature matrices. Capabilities are scored, gaps are highlighted and vendors are ranked. The result feels objective and defensible. But feature scoring tends to flatten meaningful differences. A simple "yes" does not explain how deeply a capability is integrated, how much effort it takes to activate or what it requires from your teams once it is live.

Two platforms may both receive full marks for personalization, search, AI or headless delivery. Yet the operational effort required to use those capabilities, scale them and govern them may be dramatically different.
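
As a rough illustration of that flattening effect, the sketch below scores two platforms with a conventional weighted feature matrix and then compares a second number the matrix never sees. The weights, scores and engineer-week figures are hypothetical, chosen only to show the arithmetic; they do not describe any real vendor.

```python
# Hypothetical weights (percentage points) for a weighted feature matrix.
WEIGHTS = {"personalization": 40, "search": 20, "ai": 20, "headless": 20}


def weighted_score(scores):
    """Classic feature-matrix score: sum of weight * score, on a 0-100 scale."""
    return sum(WEIGHTS[feature] * scores[feature] for feature in WEIGHTS)


# Both platforms "support" every capability, so both receive full marks (1).
platform_a = {"personalization": 1, "search": 1, "ai": 1, "headless": 1}
platform_b = {"personalization": 1, "search": 1, "ai": 1, "headless": 1}

# Hypothetical activation effort per capability, in engineer-weeks.
effort_a = {"personalization": 2, "search": 1, "ai": 3, "headless": 1}
effort_b = {"personalization": 12, "search": 4, "ai": 10, "headless": 6}

score_a = weighted_score(platform_a)
score_b = weighted_score(platform_b)
total_effort_a = sum(effort_a.values())
total_effort_b = sum(effort_b.values())

print(f"Feature score: A={score_a}, B={score_b}")
print(f"Activation effort (engineer-weeks): A={total_effort_a}, B={total_effort_b}")
```

In this made-up case the matrix ranks the two platforms as equal, while the effort needed to activate the same capabilities differs several times over. That gap is the kind of information a yes/no score cannot carry.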

Shifting Evaluation Toward Operating KPIs

A more durable approach is to evaluate platforms against the KPIs that shape how your organization actually operates.

Instead of asking whether a feature exists, ask how the platform impacts measurable outcomes such as:

- How long it takes a marketer to create and publish a new page

- How independently front-end teams can release changes

- How much infrastructure your organization must own and maintain

- How easily new capabilities can be activated without creating integration sprawl

- How effectively AI can move from experimentation to governed production

These are not feature questions. They are operating model questions.

In practice, we see five KPI domains consistently determine long-term success:

- Authoring Experience & Content Operations. How efficiently content is created, governed and scaled across regions and brands.

- Experience Delivery & Front-End Velocity. How quickly and reliably digital experiences can be built, optimized and evolved.

- Platform Operational Efficiency. How much effort, cost and risk are required to run and maintain the platform over time. (This domain and the two that follow are covered in Part 2 of this series.)

- Platform Ecosystem & Composable Capabilities. The strength of the surrounding community and how easily adjacent capabilities such as personalization, search and automation can be activated over time.

- AI Operational Readiness. How effectively AI is embedded into workflows and how safely it can operate across systems with governance and control.

Core KPI Domains for Evaluating Digital Experience Platforms

These five KPI domains frame DXP evaluation around operating model impact rather than feature parity, helping teams assess how platforms shape execution over time.

| KPI domain | What it measures | Why it matters |
|---|---|---|
| Authoring Experience & Content Operations | How efficiently content is created, governed and scaled across regions and brands | Reveals whether the platform reduces publishing friction, supports reuse and helps teams scale content operations without multiplying coordination overhead |
| Experience Delivery & Front-End Velocity | How quickly and reliably digital experiences can be built, optimized and evolved | Shows whether teams can sustain strong performance and release changes quickly without introducing architectural drag |
| Platform Operational Efficiency | How much effort, cost and risk are required to run and maintain the platform over time | Determines whether engineering capacity goes toward innovation or is consumed by infrastructure, upgrades and deployment coordination |
| Platform Ecosystem & Composable Capabilities | The strength of the surrounding community and how easily adjacent capabilities such as personalization, search and automation can be activated over time | Indicates how quickly new capabilities can move into production and how much long-term operational responsibility integrations create |
| AI Operational Readiness | How effectively AI is embedded into workflows and how safely it can operate across systems with governance and control | Helps teams assess whether AI will deliver governed operational leverage or remain a shallow productivity layer |

Operating Model Signals Behind Platform Choice

These KPIs are not theoretical. They surface in staffing models, release calendars, budget planning and executive reporting.

When evaluated through this lens, differences between platforms become clearer. Not because one has a feature and another does not, but because each platform steers your organization toward a different operating pattern.

A KPI-driven evaluation shifts the conversation from "What can this platform do?" to "What will this platform require from us?"

That shift is where better platform decisions begin. The sections below break down the first two KPI domains.


1. Authoring Experience & Content Operations

For most enterprises, the impact of a DXP decision becomes visible in the mechanics of how content moves from idea to publication. The authoring interface is only one layer. Underneath it sits a system of models, workflows, governance rules and integrations that define how work actually flows.

Why Authoring Workflows Define Platform Impact

Evaluating this domain requires more than asking whether a platform has a visual editor or workflow engine. It requires examining how those capabilities change throughput, dependency and coordination over time. Two KPI lenses are particularly useful here: Authoring Velocity and Content Operations Efficiency.

Authoring Velocity

Authoring velocity measures how independently and efficiently teams can create, update and publish experiences. It captures both speed and dependency: how quickly work moves through the system, and how often that work requires developer intervention.

Vendors have invested heavily in improving the day-to-day authoring experience. Visual composition has become a major focus, especially as headless architectures have become more common.

Contentstack, for example, moved from side-by-side editing to a full in-context WYSIWYG Visual Builder in less than a year, reflecting demand for true visual authoring in decoupled environments.

Optimizely introduced a new SaaS Visual Builder designed to support headless editing patterns more natively than traditional page editing approaches.

Other vendors continue to refine model-driven editing experiences with configurable component variants, aiming to balance flexibility with governance.

Disconnected Systems Slow Authoring Throughput

However, authoring velocity is rarely constrained by the editor alone. The bigger limiter is friction created by disconnected systems.

Most enterprise teams operate across multiple sources of truth: product catalogs, location data, customer attributes, analytics signals and asset libraries. If authors cannot access that data contextually inside the editing interface, workflows slow down. Teams switch windows, copy identifiers, paste links and manually reconcile information. Those steps compound across hundreds of pages and dozens of authors.

This is where extensibility becomes a primary driver of velocity. Authoring interfaces that can be extended to surface real business data, embed selections and automate repetitive steps remove operational drag.

Historically, Sitecore was known for deep interface extensibility. As the industry shifted toward SaaS delivery models, that extensibility became more constrained across many platforms, including Sitecore's early XM Cloud experience. Sitecore's Marketplace, introduced last year, provides a structured path to bring back extensibility in a SaaS-friendly way, enabling organizations to embed integrations and authoring enhancements without relying on fragile customization patterns.

Measuring the Real Drivers of Authoring Velocity

To evaluate authoring velocity, it helps to measure the operating signals behind the UI. Organizations can track time from draft to publish for standard page types, the percentage of content changes that require engineering support, and the volume of "simple update" tickets that show up in developer backlogs. Teams can also observe how often authors must leave the platform to complete routine tasks such as selecting assets, referencing products or pulling in location information. These metrics provide a clearer picture of whether the platform is reducing dependency or formalizing it.
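
The signals above can be computed from fairly ordinary data. The following is a minimal sketch, assuming change records can be exported from a CMS audit log or ticketing system; the record fields (`drafted`, `published`, `needed_engineering`) and the sample values are hypothetical, not any platform's actual schema.

```python
from datetime import datetime
from statistics import median

# Hypothetical change records; field names and values are illustrative only.
changes = [
    {"page_type": "landing", "drafted": "2025-03-03T09:00:00",
     "published": "2025-03-03T15:00:00", "needed_engineering": False},
    {"page_type": "product", "drafted": "2025-03-04T09:00:00",
     "published": "2025-03-05T15:00:00", "needed_engineering": True},
    {"page_type": "landing", "drafted": "2025-03-05T10:00:00",
     "published": "2025-03-05T12:00:00", "needed_engineering": False},
    {"page_type": "location", "drafted": "2025-03-06T08:00:00",
     "published": "2025-03-08T10:00:00", "needed_engineering": True},
]


def hours_to_publish(change):
    """Elapsed hours between first draft and publication."""
    drafted = datetime.fromisoformat(change["drafted"])
    published = datetime.fromisoformat(change["published"])
    return (published - drafted).total_seconds() / 3600


def authoring_velocity(records):
    """Median draft-to-publish time and share of changes needing engineering."""
    durations = [hours_to_publish(r) for r in records]
    with_engineering = sum(1 for r in records if r["needed_engineering"])
    return {
        "median_hours_draft_to_publish": median(durations),
        "engineering_dependency_pct": 100 * with_engineering / len(records),
    }


report = authoring_velocity(changes)
print(report)
```

The median is used here rather than the mean so that one unusually slow page does not dominate the figure. Segmenting the same calculation by `page_type` would show which templates carry the most engineering dependency.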


Operational Signals That Reveal How a DXP Actually Performs

Instead of relying on feature lists, enterprises should track operational metrics that reveal how platforms affect daily workflows.

| Operational Metric | What It Indicates | Typical Risk Signal |
|---|---|---|
| Time from draft to publish | Efficiency of content workflows | Long publishing cycles often signal workflow friction or engineering dependency |
| Developer support for routine updates | Authoring independence | High dependency indicates poor editor capabilities or rigid architecture |
| Approval cycle duration | Governance and workflow maturity | Slow cycles may indicate disconnected planning and publishing systems |
| Content duplication across regions | Reuse and inheritance models | High duplication often signals weak content architecture |
| Global update propagation time | Platform scalability | Manual propagation increases operational overhead as ecosystems grow |
| Front-end release frequency | Developer velocity | Low release cadence often reflects architectural friction or coordination barriers |

The goal is not simply to make authoring feel modern. It is to improve throughput by reducing handoffs, minimizing developer reliance and embedding the systems authors actually depend on directly into their workflow.

Content Operations Efficiency

As digital ecosystems expand across regions, brands and channels, the operational lens shifts from page assembly to lifecycle orchestration.

Content operations efficiency measures how well the broader system supports structured reuse, governance, intake, asset management and propagation at scale. This KPI begins before content ever reaches the CMS. Campaign planning, budget allocation, asset production, review cycles and localization all shape how efficiently digital experiences are delivered.

Content Operations Extend Beyond the CMS

Vendors understand that content operations start upstream.

Optimizely's acquisition of Welcome brought a full-fledged CMP into its ecosystem, formalizing intake, planning and workflow management.

Adobe acquired Workfront to strengthen its marketing work management capabilities across its Experience Cloud.

Sitecore Content Hub has long included CMP and MRM functionality, and Sitecore has been evolving this layer further, embedding AI and agentic workflows directly into campaign planning within SitecoreAI. These investments reflect a shared industry recognition: the CMS alone does not define operational efficiency.

Digital asset management is equally central. Acquia's acquisition of Widen strengthened its DAM capabilities, reinforcing the importance of asset governance, duplication control and rights management within the broader content lifecycle. Asset variation, optimization and distribution increasingly influence publishing speed and brand consistency.

Integration Gaps Create Hidden Coordination Costs

At the same time, gaps between systems often create inefficiencies. Agentic solutions such as Gradial are emerging specifically to address the friction between planning tools, CMS platforms, DAM systems and analytics environments. These tools attempt to reduce coordination overhead by orchestrating work across systems, highlighting how persistent the integration challenge remains.

To evaluate Content Operations Efficiency as a KPI, organizations should move beyond feature presence and examine operational signals. How long does content spend in intake before entering production? What is the average approval cycle time? How frequently are assets duplicated across regions? How often are global updates manually reimplemented locally? What percentage of campaigns require reconciliation between planning tools and publishing systems?

Operational Signals That Reveal Scaling Challenges

Structural indicators provide insight into whether the operating model is absorbing complexity or distributing it. Content duplication rates, volume of localized overrides, number of governance exceptions and the time required to propagate global updates all reveal how well structured reuse is functioning. Approval cycle duration and rework rates indicate whether workflows are embedded meaningfully or operating as parallel processes.
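
Two of these structural indicators, duplication rate and localized override rate, can be approximated from a content inventory export. The sketch below assumes each item carries a content fingerprint (for example, a hash of its normalized body) and a flag marking whether it overrides a global source. Both fields and all sample values are hypothetical.

```python
from collections import Counter

# Hypothetical content inventory; identical fingerprints mean identical content.
items = [
    {"id": "hero-us", "region": "US", "fingerprint": "a1", "overrides_global": False},
    {"id": "hero-uk", "region": "UK", "fingerprint": "a1", "overrides_global": False},
    {"id": "hero-de", "region": "DE", "fingerprint": "a1", "overrides_global": False},
    {"id": "promo-us", "region": "US", "fingerprint": "b2", "overrides_global": True},
    {"id": "legal-de", "region": "DE", "fingerprint": "c3", "overrides_global": True},
]


def duplication_rate(inventory):
    """Share of items that are redundant copies of content that already exists."""
    counts = Counter(item["fingerprint"] for item in inventory)
    redundant = sum(n - 1 for n in counts.values())
    return redundant / len(inventory)


def override_rate(inventory):
    """Share of items that locally override a global source."""
    overriding = sum(1 for item in inventory if item["overrides_global"])
    return overriding / len(inventory)


dup = duplication_rate(items)
ovr = override_rate(items)
print(f"Duplication rate: {dup:.0%}, localized override rate: {ovr:.0%}")
```

Tracked over time, a rising duplication rate alongside a flat override rate would suggest regions are copying content rather than inheriting it. That is the pattern structured reuse is meant to prevent.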

The distinction in this domain is rarely about whether a platform supports workflow, CMP or DAM. Most enterprise ecosystems now do. The meaningful difference lies in alignment: how tightly planning, asset governance and publishing are integrated, how clearly inheritance and propagation are structured, and how sustainable that integration remains within a SaaS delivery model.

Content operations efficiency ultimately determines whether scale reduces marginal effort or multiplies it. The KPI lens reframes evaluation from "Does it include CMP or DAM?" to "How much coordination, duplication and rework does our organization require to operate at scale?"

2. Experience Delivery & Front-End Velocity

For most enterprises, the impact of a DXP decision becomes visible in how experiences are delivered and evolved after publication. Performance and release speed are only the surface layer. Underneath sit rendering models, deployment patterns, caching strategies and framework choices that shape how reliably users receive content and how quickly teams can ship change.

Architecture Decisions Shape Performance Outcomes

Evaluating this domain requires more than asking whether a platform supports headless delivery or edge hosting. It requires examining how architectural decisions influence performance consistency, release independence and long-term iteration speed. Two KPI lenses are particularly useful here: Experience Performance and Front-End Velocity.

Experience Performance

Experience performance measures how consistently users receive fast, stable and discoverable experiences, and how much architectural effort is required to sustain that performance.

Headless-first platforms such as Contentful and Contentstack gained early traction largely because they made exceptional performance more achievable. By decoupling the front end and embracing modern rendering strategies, they enabled teams to optimize aggressively for speed, SEO and global delivery. That shift created pressure across the market.

Sitecore introduced JSS to support decoupled development and ultimately committed its future architecture to a headless-only model in SitecoreAI.

Optimizely's SaaS offering similarly supports headless delivery by default. Even Adobe now supports headless patterns, though many implementations across both Adobe and Optimizely remain traditionally coupled.

Modern delivery models combine static generation, incremental regeneration and server-side rendering within the same application. Edge execution has evolved beyond simple CDN caching into distributed logic that preserves personalization while reducing latency. Image optimization and structured metadata are often handled automatically within modern frameworks.

These advances are not theoretical. They are responses to measurable business pressure: Core Web Vitals influence search visibility, performance affects conversion rates, and global latency impacts user engagement.


Performance Metrics That Matter in Production

Evaluating this KPI requires examining operational metrics rather than feature claims. Organizations should assess sustained Core Web Vitals performance across regions, performance variance between personalized and non-personalized experiences, rebuild frequency and cache invalidation complexity, and the time required to publish performance-sensitive updates. Accessibility compliance rates and structured data consistency also provide signals about architectural discipline.
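
A minimal sketch of two of these checks follows, assuming field measurements of Largest Contentful Paint (LCP) are available from a real-user monitoring export. The sample values and record fields are hypothetical. The 2,500 ms figure is the published "good" LCP threshold for Core Web Vitals, which is assessed at the 75th percentile; the nearest-rank percentile used here is one simple method among several.

```python
import math
from collections import defaultdict

LCP_GOOD_MS = 2500  # Core Web Vitals "good" LCP threshold, assessed at p75

# Hypothetical real-user LCP samples in milliseconds.
samples = [
    {"region": "us", "personalized": False, "lcp_ms": 1800},
    {"region": "us", "personalized": False, "lcp_ms": 2100},
    {"region": "us", "personalized": True, "lcp_ms": 2300},
    {"region": "us", "personalized": True, "lcp_ms": 2600},
    {"region": "apac", "personalized": False, "lcp_ms": 2400},
    {"region": "apac", "personalized": False, "lcp_ms": 2700},
    {"region": "apac", "personalized": True, "lcp_ms": 3100},
    {"region": "apac", "personalized": True, "lcp_ms": 3400},
]


def p75(values):
    """75th percentile using the nearest-rank method."""
    ordered = sorted(values)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def p75_by(records, key):
    """Group LCP samples by the given field and return p75 per group."""
    groups = defaultdict(list)
    for record in records:
        groups[record[key]].append(record["lcp_ms"])
    return {group: p75(values) for group, values in groups.items()}


by_region = p75_by(samples, "region")
by_personalization = p75_by(samples, "personalized")

failing_regions = [r for r, value in by_region.items() if value > LCP_GOOD_MS]
personalization_penalty_ms = by_personalization[True] - by_personalization[False]

print("p75 LCP by region:", by_region)
print("Regions above threshold:", failing_regions)
print("Personalization penalty (ms):", personalization_penalty_ms)
```

Running the same comparison on a schedule, rather than once during evaluation, is what turns it into a measure of sustained performance instead of a launch-day snapshot.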

Traditional server-rendered implementations often depended heavily on CDN caching to offset backend latency. That approach delivered speed for static pages but created tension when personalization and dynamic content were introduced. Modern headless and hybrid rendering strategies reduce that tradeoff, though they introduce new operational responsibilities around revalidation and freshness.

Most enterprise digital experience platforms can achieve strong performance outcomes. The meaningful distinction lies in how much architectural coordination, DevOps maturity and ongoing tuning are required to maintain them over time. The KPI lens shifts evaluation from "Can it be fast?" to "How sustainably can we keep it fast as complexity grows?"

Front-End Velocity

Performance alone does not determine competitive advantage. Enterprises must also evolve experiences continuously, without introducing release friction or cross-team bottlenecks.

Release Independence Drives Competitive Advantage

Front-End Velocity measures how quickly teams can design, build and release changes once a platform is live. It reflects framework alignment, deployment independence and developer efficiency over time.

Why Headless Architectures Accelerated Performance Innovation

The rise of headless-first platforms such as Contentful and Contentstack accelerated adoption of modern React-based architectures. By decoupling the front end from the CMS, they enabled teams to leverage an ecosystem that evolves rapidly, with strong library support, modern rendering patterns and broad developer availability. React's pace of innovation has significantly outstripped that of traditional Java- and .NET-based coupled CMS architectures, especially in areas such as hybrid rendering, streaming and developer tooling.

In response, enterprise vendors across the market have aligned more closely with React and Next.js. Headless delivery models, starter kits and official integrations are now common. Optimizely's SaaS offering supports headless delivery by design. Sitecore's current strategic direction is fully headless. Adobe and others provide headless options alongside traditional coupled implementations.

However, it is critical to evaluate this KPI within a consistent deployment model. If an organization chooses a headless architecture to benefit from front-end velocity, it must also assess the authoring experience in that same headless model. Some platforms support modern front-end frameworks but sacrifice in-context editing depth when decoupled. Evaluating the velocity benefits of headless while assuming the editing comfort of a coupled CMS leads to unrealistic expectations.

How to Measure Front-End Velocity in Practice

Front-End Velocity should be measured operationally. Release frequency for front-end changes, time from approved design to production, rollback complexity and the percentage of front-end updates that require backend coordination all provide concrete indicators. Talent availability and ramp-up time for new developers also signal ecosystem alignment.
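
These indicators can be derived from a release log. The sketch below is one possible shape, assuming each release record notes when the design was approved, when it shipped and whether backend coordination was needed. The field names, dates and four-week window are hypothetical.

```python
from datetime import date

# Hypothetical front-end release log for a four-week window.
releases = [
    {"design_approved": "2025-04-01", "released": "2025-04-04", "needed_backend": False},
    {"design_approved": "2025-04-02", "released": "2025-04-12", "needed_backend": True},
    {"design_approved": "2025-04-10", "released": "2025-04-15", "needed_backend": False},
    {"design_approved": "2025-04-20", "released": "2025-04-28", "needed_backend": True},
]


def lead_time_days(release):
    """Days from approved design to production release."""
    approved = date.fromisoformat(release["design_approved"])
    shipped = date.fromisoformat(release["released"])
    return (shipped - approved).days


def front_end_velocity(log, period_weeks):
    """Release cadence, mean lead time and backend coordination share."""
    lead_times = [lead_time_days(r) for r in log]
    coordinated = sum(1 for r in log if r["needed_backend"])
    return {
        "releases_per_week": len(log) / period_weeks,
        "mean_lead_time_days": sum(lead_times) / len(lead_times),
        "backend_coordination_pct": 100 * coordinated / len(log),
    }


velocity = front_end_velocity(releases, period_weeks=4)
print(velocity)
```

In this invented log, the releases that needed backend coordination are also the slowest ones. Splitting lead time by `needed_backend` is a quick way to test whether that pattern holds in real data.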

Design system maturity plays a role as well. When design tokens and atomic components map cleanly to reusable front-end libraries, iteration speeds increase and QA overhead declines. When design intent and implementation drift apart, velocity erodes regardless of framework choice.

Nearly every enterprise DXP now advertises headless support. The meaningful difference lies in how naturally the platform aligns with modern front-end ecosystems and whether that alignment holds up under real-world authoring and deployment constraints.

The KPI question remains practical: under the deployment model we will actually use, does this platform increase our release cadence over time, or does architectural friction slow us as complexity grows?

Editor’s Note: Part 2 of this two-part series on evaluating digital experience platforms will examine the operational realities that determine long-term success — including infrastructure ownership, deployment efficiency, ecosystem depth, community strength and AI operational readiness — before reframing total cost of ownership through the lens of how platforms shape enterprise execution over time.
