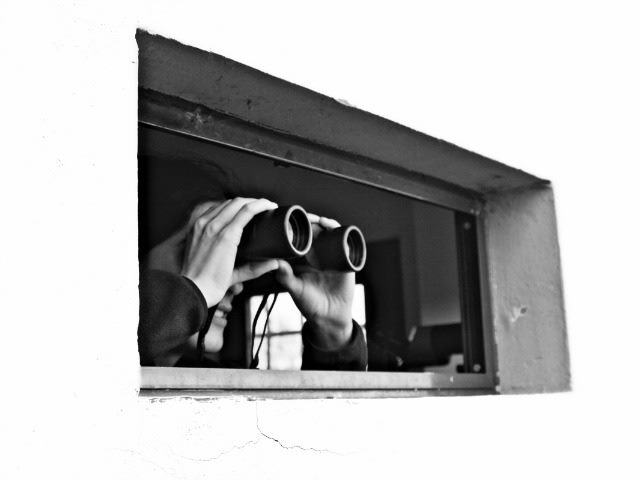

Data breaches, drones, NSA surveillance: as much as these issues have captured the minds of consumers, pundits and government agencies, we have not yet seen the real privacy problems that will soon face us. What happens when devices become proxies for public resources? With the growing asymmetry between those who gather data and those who are its subjects, it will be critical to create new frameworks for accountability so that privacy and big data can coexist in the future.

For context, the traditional privacy regime governing how we collect and protect personal information has for many years been based on the FTC’s Fair Information Practice Principles (FIPPs) and a set of eight fair information practices codified in the OECD Guidelines on the Protection of Privacy and Transborder Flows of Personal Data. These require that data be collected only when needed, accurately and for a particular purpose; that its use be limited; that it be kept securely; that collection be open and transparent; and that individual subjects have the right to review their data, with the data collector fully accountable to the individual.

However, many of these principles are difficult, if not impossible, to uphold in the world of big data. Big data is generally characterized by “velocity,” “variety” and “volume” of such magnitude that it may be combined to create information that was previously not available. I’ve read many interesting definitions of big data, but two powerful ones raised by Gil Press at Forbes are:

“The belief that the more data you have the more insights and answers will rise automatically from the pool of ones and zeros.”

“A new attitude by businesses, non-profits, government agencies, and individuals that combining data from multiple sources could lead to better decisions.”

Predictive Privacy Harms

With the increased demand for big data in modern business, we need to consider the ideas of consent and choice even when examining concepts like predictive privacy harms — situations where companies may not have collected any personally identifiable information (PII), but have gathered low-level information such as a “Like” on Facebook or a one-time purchase.

So why does this low-level information matter in terms of privacy? To a certain extent, when customers shop on the Internet, they are already used to targeted advertising. Shopping websites like Amazon track customers’ progress through their sites and capture shopping habits, allowing them to suggest items that may be of interest. Consider how this compares to in-store tracking and what department stores already do today: keeping track of frequent purchases so they can send coupons or promotional information to entice you back into the store.

Here is where I make the distinction and draw the proverbial line in the sand. If I walk into a store and I want to identify myself, it is my choice. Part of the experience of shopping is the ability to walk around unknown and anonymously, slipping in and out of aisles and browsing around where nobody knows my name. If I want or need help and attention, I can ask for it. Online or in-person, I believe that individuals should have the right to ask for help if they need it, and remain undisturbed if they don’t — to share their information if they want to and to choose the purpose for which they are providing it.

Legislation or regulation alone may not be the answer to this problem. Rather, the key lies in a combination of regulation — from both the government and through the organizations collecting this data — and the power of consumers themselves.

Creating Frameworks

I’d like to suggest that organizations and government agencies can do more to help protect customers by building a framework based on the FIPPs, where data itself is tagged with privacy preferences as it flows between systems, allowing consent to be applied. If we understand what the data is and how it was collected (through a UI or even an Internet of Things device), we can further determine whether the subject of the data (employee, customer or partner) can establish their preferences at the collection point.

For example, if a business is collecting information from a customer through a wearable fitness device, then the customer, upon registration of the device, can indicate whether or not the company may use such personal data to provide individualized goals, incentives and information that compares them with other people in their age group. The data collected from that person could be further tagged with privacy preferences indicating whether the company may later use it for health research or share it with third parties. In this way, even if the purpose of the data collection changes over time, the profiles and preferences could be examined and updated.
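To make the idea of preference-tagged data a little more concrete, here is a minimal Python sketch of the wearable example. Everything in it is an illustrative assumption rather than an existing product or standard: the `Purpose` values, the `TaggedRecord` type and the helper functions are hypothetical names chosen for this sketch. It only shows the general shape of the approach, in which each record carries the subject's consent with it and every downstream use is checked against that tag.

```python
from dataclasses import dataclass, replace
from enum import Enum


class Purpose(Enum):
    """Hypothetical purposes a customer could consent to at device registration."""
    PERSONAL_GOALS = "personal_goals"
    PEER_COMPARISON = "peer_comparison"
    HEALTH_RESEARCH = "health_research"
    THIRD_PARTY_SHARING = "third_party_sharing"


@dataclass(frozen=True)
class TaggedRecord:
    """A data point that travels together with its subject's privacy preferences."""
    subject_id: str
    source: str                        # assumed label, e.g. "wearable" or "web_ui"
    payload: dict
    allowed: frozenset = frozenset()   # purposes the subject has consented to


def may_use(record, purpose):
    """Return True only if the subject consented to this purpose."""
    return purpose in record.allowed


def filter_for(records, purpose):
    """Keep only the records that may be used for the given purpose."""
    return [r for r in records if may_use(r, purpose)]


def update_preferences(record, allowed):
    """Return a copy of the record with the subject's updated preferences."""
    return replace(record, allowed=frozenset(allowed))


# Consent captured at registration: goals and peer comparison only.
steps = TaggedRecord(
    subject_id="customer-1",
    source="wearable",
    payload={"steps": 8200},
    allowed=frozenset({Purpose.PERSONAL_GOALS, Purpose.PEER_COMPARISON}),
)

print(may_use(steps, Purpose.PERSONAL_GOALS))    # consented at registration
print(may_use(steps, Purpose.HEALTH_RESEARCH))   # not consented, so refused

# Later the customer opts in to health research; the tag is updated.
steps_v2 = update_preferences(steps, steps.allowed | {Purpose.HEALTH_RESEARCH})
print(len(filter_for([steps, steps_v2], Purpose.HEALTH_RESEARCH)))
```

Keeping the consent tag on the record itself, rather than in a separate system, is what lets the preferences be examined and updated when the purpose of collection changes. A real deployment would also need to record when and how consent was given, which this sketch leaves out.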

The information that companies hold about their employees, customers and partners, as well as other sensitive information, is growing rapidly. This acceleration of data collection and manipulation holds the promise of tremendous competitive advantage for those that can effectively use it. However, for effective data management and collaboration to become a competitive advantage, the business needs timely access to data and multi-directional communication flow, with the right risk management filters in place, so that data is available to those who need it and kept from those who should not have access.

Companies can repurpose their compliance programs, which have traditionally been viewed as a cost center, and turn this previously untapped information into a business asset. This not only creates a quantifiable return on investment for data security and privacy programs, but also helps the company increase productivity and stay out of regulatory hot water.
