Chatbots have become what apps once were: shiny new must-have items that retailers and businesses are lining up to deploy.

But whenever you adopt a new medium, be it an app, a website or a chatbot, you have to monitor its performance to make sure it’s effective.

Do Chatbots Really Help Customers?

For years, marketers have had analytics to monitor the effectiveness of websites, and businesses later adopted the same approach for apps. But what kind of metrics would work for chatbots? How can marketers tell if a bot is really helping customers consistently?

A few analytics tools for chatbots are starting to emerge. But the most noteworthy one this year comes from Google. In November, the company that led the analytics charge years ago with Google Analytics launched a dedicated chatbot analytics platform called Chatbase.

The debut of Google Chatbase was quieter than the introduction of Google Analytics; Chatbase had been in beta for developers until shortly before its public launch. But the tool takes analysis to another level, mainly because of the artificial intelligence (AI) that underpins chatbot design.

With other analytics tools, analysts usually review metrics that represent activity on a website or app layout, such as arriving at a certain page or clicking a button, and they judge that activity against business objectives.

Measuring Response Dynamics

With chatbots, however, analysts gather data from AI-driven responses to user requests, which differ from a static webpage or an action triggered by a page element. Chatbot responses are far more varied than webpage text, so the causal metrics used in a web analytics system could overlook key aspects of how users interact with chatbots.

The many variations in responses can also introduce statistical quality concerns such as bias, which can tilt an experience toward unexpected and sometimes negative outcomes.

Thus chatbot analysis, while still conducted against business objectives, places greater emphasis on dynamics: what kinds of requests are made, how frequently certain requests appear, and which requests the chatbot algorithm has difficulty processing.
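To make those dynamics concrete, here is a minimal Python sketch that ranks request types by frequency and reports how often each one goes unhandled. The log format, the intent labels and the `request_dynamics` helper are hypothetical; a real analytics tool would derive intents from its own classifier.

```python
from collections import Counter

# Hypothetical log: one (intent, handled) pair per user request.
# Intent labels and log format are illustrative assumptions.
requests = [
    ("store_hours", True),
    ("order_status", True),
    ("store_hours", True),
    ("return_policy", False),
    ("order_status", False),
    ("store_hours", True),
    ("return_policy", False),
]

def request_dynamics(log):
    """Return (intent, count, share_of_traffic, not_handled_rate), most frequent first."""
    totals = Counter(intent for intent, _ in log)
    misses = Counter(intent for intent, handled in log if not handled)
    n = len(log)
    return [
        (intent, count, count / n, misses[intent] / count)
        for intent, count in totals.most_common()
    ]

for intent, count, share, miss_rate in request_dynamics(requests):
    print(f"{intent:<14} {count:>2} requests {share:6.1%} of traffic {miss_rate:6.1%} not handled")
```

Frequency and difficulty are kept as separate columns on purpose: a rare request that always fails and a common request that occasionally fails call for different fixes.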

Google Chatbase comes with a REST API that can be integrated into textbots and voicebots on almost any messaging platform. That means Chatbase can be used with popular messaging apps such as Facebook Messenger, Kik, Slack, Allo and Skype.
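As a rough sketch of what reporting to such an API involves, the Python below builds one message record and wraps it in an HTTP POST using only the standard library. The endpoint URL and the field names (`api_key`, `type`, `user_id`, `time_stamp`, `platform`, `message`, `intent`, `not_handled`, `version`) are modeled on Chatbase’s generic message API but should be treated as assumptions and checked against the official documentation; the helper names `build_message` and `make_request` are hypothetical.

```python
import json
import time
import urllib.request

# Assumed endpoint, modeled on Chatbase's generic message API; verify before use.
CHATBASE_MESSAGE_URL = "https://chatbase.com/api/message"

def build_message(api_key, msg_type, user_id, platform, message,
                  intent=None, not_handled=False, version="1.0"):
    """Build one message record; msg_type is 'user' or 'agent' (assumed field names)."""
    if msg_type not in ("user", "agent"):
        raise ValueError("msg_type must be 'user' or 'agent'")
    payload = {
        "api_key": api_key,
        "type": msg_type,
        "user_id": user_id,
        "time_stamp": int(time.time() * 1000),  # milliseconds since the epoch
        "platform": platform,                    # e.g. "Messenger", "Slack", "Kik"
        "message": message,
        "version": version,
    }
    if intent is not None:
        payload["intent"] = intent
    if not_handled:
        payload["not_handled"] = True
    return payload

def make_request(payload):
    """Wrap the payload in a JSON POST request without sending it."""
    return urllib.request.Request(
        CHATBASE_MESSAGE_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

msg = build_message("YOUR_API_KEY", "user", "user-42", "Slack",
                    "what time do you open?", intent="store_hours")
req = make_request(msg)
# urllib.request.urlopen(req)  # uncomment to actually send the record
```

Sending is left to an explicit `urllib.request.urlopen(req)` call so the record can be inspected first. The `platform` field is what would let a dashboard compare Messenger, Kik, Slack and other channels side by side.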

A Look Under the Hood

To make installation a bit easier for developers, Google provides a basic chatbot kit that integrates with the Chatbase REST API. The kit uses Node.js and a Microsoft bot, so developers can see how the REST API is installed. I also found an npm package, which can be helpful for chatbots whose tech stack includes Node.js and npm. In addition, Chatbase is included with Dialogflow, a voice- and text-based conversational interface platform from Google.
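The Node.js kit itself isn’t reproduced here. As a language-neutral illustration, sketched in Python, one common integration pattern is to wrap the bot’s reply handler so every exchange is logged on both sides: the user’s message and the bot’s answer. The `log_exchange` decorator, the `sink` callback and the convention that a `None` reply means “not handled” are all hypothetical; in practice the sink would forward each event to the analytics REST API.

```python
import functools
import time
from typing import Callable, Optional

def log_exchange(sink: Callable[[dict], None], platform: str):
    """Decorator that records the user message and the bot's reply as separate events.

    Hypothetical convention: the wrapped handler returns (reply, intent), and a
    reply of None means the bot could not handle the request.
    """
    def decorate(handler):
        @functools.wraps(handler)
        def wrapped(user_id: str, text: str) -> Optional[str]:
            received = time.monotonic()
            reply, intent = handler(user_id, text)
            elapsed_ms = (time.monotonic() - received) * 1000
            sink({"type": "user", "user_id": user_id, "platform": platform,
                  "message": text, "intent": intent,
                  "not_handled": reply is None})
            if reply is not None:
                sink({"type": "agent", "user_id": user_id, "platform": platform,
                      "message": reply, "response_ms": elapsed_ms})
            return reply
        return wrapped
    return decorate

events = []

@log_exchange(events.append, platform="Slack")
def toy_bot(user_id, text):
    # A deliberately tiny bot that only understands questions about opening hours.
    if "open" in text.lower():
        return "We open at 9 a.m.", "store_hours"
    return None, None

toy_bot("user-1", "When do you open?")
toy_bot("user-1", "Can I pay with gold bars?")
```

Wrapping the handler, rather than sprinkling logging calls through the bot’s logic, keeps analytics out of the conversation code and guarantees that unhandled requests are recorded too.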

Once Chatbase is installed, users can review chatbot performance in three sets of report panels. The dashboard resembles those of Google Analytics and Data Studio, reflecting Google’s effort to standardize the look of its products’ user interfaces through the Material Design initiative.

At the top of the dashboard is a timeline for active users, very similar to most summary dashboards seen for websites, apps and social media platforms. It displays user growth over time, with trendlines for monthly, weekly and daily periods. The metrics can be compared across chat platforms, highlighting the platforms that are underperforming.
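The daily, weekly and monthly active-user counts behind such a timeline can be approximated as distinct users within a trailing window. This Python sketch uses a hypothetical activity log and a 30-day month; Chatbase’s exact windowing rules aren’t described here, so treat the window lengths and the `active_users` helper as assumptions.

```python
from datetime import date, timedelta

# Hypothetical activity log: (user_id, platform, day the user sent a message).
activity = [
    ("u1", "Messenger", date(2017, 11, 1)),
    ("u2", "Slack",     date(2017, 11, 1)),
    ("u1", "Messenger", date(2017, 11, 3)),
    ("u3", "Messenger", date(2017, 11, 7)),
    ("u2", "Slack",     date(2017, 11, 25)),
    ("u4", "Kik",       date(2017, 11, 28)),
]

def active_users(log, as_of, window_days, platform=None):
    """Count distinct users active in the window_days ending on as_of (inclusive)."""
    start = as_of - timedelta(days=window_days - 1)
    return len({user for user, plat, day in log
                if start <= day <= as_of and (platform is None or plat == platform)})

as_of = date(2017, 11, 28)
for label, days in (("daily", 1), ("weekly", 7), ("monthly", 30)):
    print(label, active_users(activity, as_of, days))  # daily 1, weekly 2, monthly 4
```

Passing `platform="Messenger"` (or another channel name) restricts the count to one platform, which is the comparison that exposes underperforming channels.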

3 Types of Reports

Three report panels, called Sessions, Messages and Transcripts, each give a specific overview of chatbot responses.

The Sessions report shows four metrics: daily sessions, daily sessions per user, sessions per user and user messages per session. The purpose of this panel is to track engagement. Each metric appears as a percentage change and a volume number, with a trendline underneath the number.

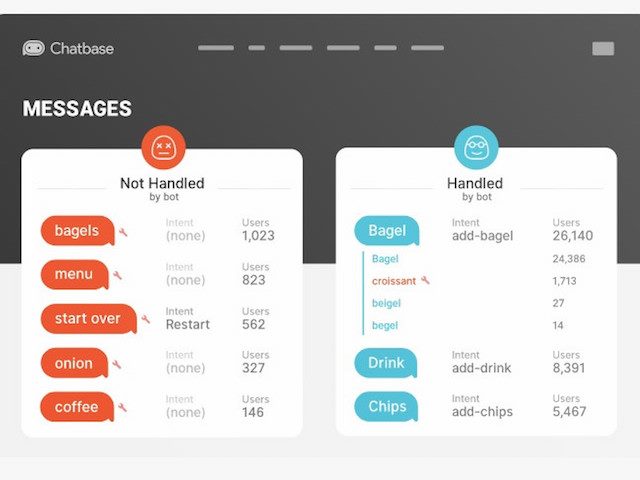
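Those four metrics all depend on how a session is defined. The sketch below assumes a new session starts after 30 minutes of user inactivity and that a session counts toward the day it begins; both rules, the metric formulas, the log and the helper names are assumptions for illustration, not Chatbase’s documented definitions.

```python
from collections import defaultdict
from datetime import datetime, timedelta

SESSION_GAP = timedelta(minutes=30)  # assumed inactivity timeout

# Hypothetical user-message log: (user_id, timestamp)
messages = [
    ("u1", datetime(2017, 11, 1, 9, 0)),
    ("u1", datetime(2017, 11, 1, 9, 5)),
    ("u1", datetime(2017, 11, 1, 14, 0)),   # more than 30 min later: new session
    ("u2", datetime(2017, 11, 1, 10, 0)),
    ("u2", datetime(2017, 11, 2, 10, 0)),
    ("u2", datetime(2017, 11, 2, 10, 10)),
]

def sessionize(log, gap=SESSION_GAP):
    """Split each user's messages into sessions; returns [(user_id, [timestamps])]."""
    by_user = defaultdict(list)
    for user, ts in log:
        by_user[user].append(ts)
    sessions = []
    for user, stamps in by_user.items():
        stamps.sort()
        current = [stamps[0]]
        for ts in stamps[1:]:
            if ts - current[-1] > gap:   # idle too long: close the session
                sessions.append((user, current))
                current = [ts]
            else:
                current.append(ts)
        sessions.append((user, current))
    return sessions

def session_metrics(log):
    """Compute Sessions-panel style metrics under the assumptions above."""
    sessions = sessionize(log)
    per_day = defaultdict(list)  # day -> one user id per session started that day
    for user, stamps in sessions:
        per_day[stamps[0].date()].append(user)
    users = {user for user, _ in sessions}
    return {
        "daily_sessions": len(sessions) / len(per_day),
        "daily_sessions_per_user":
            sum(len(u) / len(set(u)) for u in per_day.values()) / len(per_day),
        "sessions_per_user": len(sessions) / len(users),
        "user_messages_per_session": len(log) / len(sessions),
    }

print(session_metrics(messages))
# {'daily_sessions': 2.0, 'daily_sessions_per_user': 1.25,
#  'sessions_per_user': 2.0, 'user_messages_per_session': 1.5}
```

Changing `SESSION_GAP` shifts every metric at once, which is why it is worth knowing a tool’s session definition before comparing its numbers with another’s.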

The Messages report is designed to identify opportunities to improve response efficiency. It displays two columns: “handled” user queries (those to which the bot responded with an action) and “not handled” queries (phrases that received no action). Other metrics include the percentage of users present at the time of a given request, and agent response time, which represents how long the bot took to respond.
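The core arithmetic of such a report can be sketched as the share of handled versus not-handled queries plus a typical response latency. Here the log format and the `messages_summary` helper are hypothetical, response time is summarized with a median (one reasonable choice; the report itself may aggregate differently), and unhandled queries are assumed to have no timed reply.

```python
from statistics import median

# Hypothetical log: (handled, seconds until the agent replied, or None if no reply)
exchanges = [
    (True, 0.8),
    (True, 1.2),
    (False, None),  # no action taken, so there is no reply to time
    (True, 0.5),
    (False, None),
]

def messages_summary(log):
    """Percentages of handled and not-handled queries plus median response time."""
    handled = sum(1 for ok, _ in log if ok)
    latencies = [seconds for _, seconds in log if seconds is not None]
    return {
        "handled_pct": 100 * handled / len(log),
        "not_handled_pct": 100 * (len(log) - handled) / len(log),
        "median_response_s": median(latencies) if latencies else None,
    }

print(messages_summary(exchanges))
# {'handled_pct': 60.0, 'not_handled_pct': 40.0, 'median_response_s': 0.8}
```

A median is used rather than a mean so that a single slow reply does not distort the picture of how quickly the bot usually responds.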

The Transcripts report looks similar to the Messages report, but its purpose differs slightly: it highlights repeated requests in the request history. Analysts can drill down to the words that were misinterpreted and see how the bot responded, which saves development time in pinpointing whether the bot stumbled over a mispronunciation or truly cannot fulfill a request.
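Spotting repeated requests mostly comes down to grouping near-identical phrasings. This minimal Python sketch normalizes unhandled user messages (lower-casing and dropping punctuation) and lists any phrase that recurs; the transcript rows, the `normalize` rule and the `min_count` threshold are illustrative assumptions rather than how Chatbase groups requests.

```python
import re
from collections import Counter

# Hypothetical transcript rows: (user_id, user message, bot reply or None if not handled)
transcript = [
    ("u1", "Where's my order?", "Your order ships tomorrow."),
    ("u2", "Do you price match?", None),
    ("u3", "do you price-match", None),
    ("u1", "Do you price match??", None),
    ("u4", "Can I return shoes?", "Returns are free within 30 days."),
]

def normalize(text):
    """Lower-case and keep only word characters so similar phrasings group together."""
    return " ".join(re.findall(r"[a-z0-9']+", text.lower()))

def repeated_misses(rows, min_count=2):
    """Unhandled phrases that occur at least min_count times, most frequent first."""
    counts = Counter(normalize(text) for _, text, reply in rows if reply is None)
    return [(phrase, n) for phrase, n in counts.most_common() if n >= min_count]

print(repeated_misses(transcript))  # [('do you price match', 3)]
```

Here the three price-match questions collapse into one phrase with a count of 3, pointing to a gap in the bot’s coverage rather than a one-off misreading.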

Assessing, and Improving, Performance

Businesses are increasingly using messenger platforms as conversational interfaces to customers, so the value of chatbot analytics tools is likely to rise. Platforms that compete with Google Chatbase include Dashbot and Botlytics.

Chatbots help brands maintain a high-quality omnichannel presence by guiding customers to the right information. Bot analytics platforms like Google Chatbase give marketers the information they need to improve the customer experience by improving chatbot performance.
