The Gist

- The vendor noise is deafening. With 15,384 commercial martech tools in the 2025 landscape and AI-powered pitches arriving daily, the biggest risk for marketing teams is buying more capability before using what they already own.

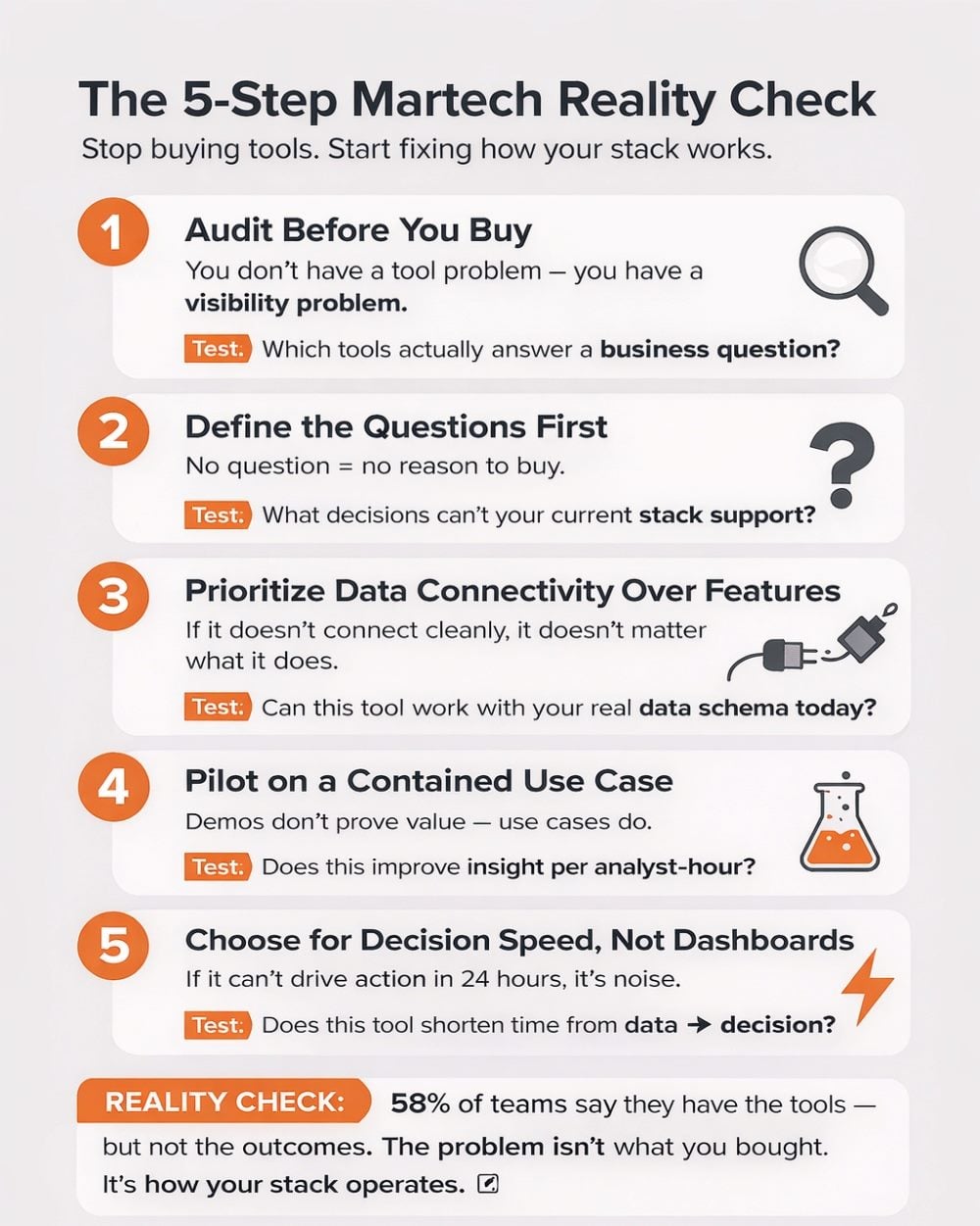

- Analytics selection needs a process, not a demo. A structured five-step framework — auditing current tools, defining analytical questions, testing data connectivity, piloting on contained use cases, and validating decision speed — produces better outcomes than feature comparison alone.

- Agentic AI can enhance custom analytics. For teams willing to think beyond prepackaged dashboards, agentic AI solutions like Claude Code can automate data queries, testing, and reporting pipelines, extending the stack without adding another vendor.

Every few months, a new wave of marketing technology advice arrives — from vendor webinars, analyst newsletters, conference keynotes and the kind of LinkedIn post that generates ten thousand reactions before lunch. Each wave carries a version of the same message: the right martech tool will solve your problem.

The martech landscape is now a huge software universe, with each tool acting as its own star or planet. That universe has grown to 15,384 commercial solutions in 2025, according to Scott Brinker's annual martech landscape overview. Navigating that universe without a map is how marketing teams accumulate tools they don't use. The Brinker figure also implies that marketing leaders face a difficult information environment: an abundance of choices and advice that can hamper quick, rational decisions.

To navigate this universe, marketers do have a starting point: a five-step framework for selecting analytics-focused martech tools. Each step builds on the previous one, moving from an honest assessment of current capabilities to a structured evaluation process. I explain how each step cuts through vendor noise and what makes an upgrade or a new purchase a good choice.

Along the way, I examine where agentic AI marketing solutions could be deployed as an option.

Table of Contents

- Why Martech Advice Tilts Selections in 1 Direction

- Lots of Tools, Little Customer Understanding

- Why Analytics Is the Right Lens for Martech Decisions

- The 5-Step Analytics Stack Selection Framework

- What to Expect Next: Equipping Your Marketing Team for Smarter Selection

Why Martech Advice Tilts Selections in 1 Direction

The information marketers receive about tools is structurally biased toward acquisition. Vendor content, analyst research sponsored by platform providers and influencer-adjacent thought leadership all share a common incentive: more tools under evaluation means more engagement, more deals, more commissions. The advice may still be factual, but marketers must frame those ideas systematically to extract value.

Achieving value has become harder as purchased solutions go underutilized. Gartner's 2025 survey of 413 marketing technology leaders, for example, found that marketers now use approximately 33% of their stack's purchased capabilities, down from 42% in 2022 and 58% in 2020. Martech's share of the total marketing budget fell from 30% in 2023 to 22% in 2025, reflecting growing leadership skepticism about stack investment.

Recent data reinforces just how structural this problem has become. The latest CMSWire State of Digital Customer Experience report shows that while martech adoption is widespread — with 65% using CRM systems, 63% email marketing tools and roughly half using CDPs, DXPs and marketing automation — the operational challenges are not about missing tools. They are about making them work together.

In fact, 28% of organizations cite siloed systems, integration challenges and fragmented customer data as a top barrier, while 26% point to outdated technology or processes and another 26% cite lack of cross-department alignment. Budget pressure leads the list at 31%, further limiting teams’ ability to fix foundational issues rather than layer on new solutions.

Lots of Tools, Little Customer Understanding

The disconnect becomes even clearer in how teams use what they already have. The report finds that 58% of organizations say tools are in place, but the actions taken from those insights have been limited or have not produced a strong understanding of customers. Nearly half (49%) say they only “moderately” understand their customers despite significant investment in analytics, CDPs and experience platforms.

This reinforces a consistent pattern across the martech ecosystem: the constraint is not tool availability. It is integration, operational alignment and the ability to translate data into action. In that context, adding new tools — even AI-powered ones — often compounds complexity rather than resolving it.

The pace of the landscape compounds the problem. Vendors know that AI is the dominant evaluation lens right now, and nearly every platform has rebranded some portion of its product as an AI feature. The challenge for marketing leaders is distinguishing between AI that genuinely improves analytical output and AI that decorates an interface. Gartner found that 45% of martech leaders report their vendor-deployed AI agents are failing to meet performance expectations — not because the AI is bad, but because the underlying data and stack infrastructure it requires were not ready before the purchase decision was made.


Why Analytics Is the Right Lens for Martech Decisions

Among the functional categories inside a martech stack, analytics is the one that often exposes how the rest of the stack is working. When data integration breaks down, dashboards reveal how customer journeys are poorly orchestrated. Analytics serves as the accountability layer of a martech stack.

That accountability function is why the market for analytics tooling is being reshaped more dramatically than adjacent categories. According to an eMarketer report, 49% of marketers worldwide now use marketing mix modeling (MMM), and 56% of US ad buyers plan to increase their reliance on it, a reflection of the shift toward models that quantify actual business outcome drivers rather than proxy metrics.

At the same time, the composable data warehouse architecture is changing how analytics tools relate to the rest of the stack. Platforms that once required data to be copied into their proprietary storage are now under pressure from tools that query customer data directly from a cloud warehouse, reducing duplication and improving the governance of first-party data flows.

The implication for marketers selecting an analytics tool is that the evaluation cannot be conducted in isolation. A dashboard platform that produces beautiful visualizations but cannot join cleanly to your CRM event stream or warehouse identity layer will create the same integration overhead that caused the previous tool to be abandoned. The analytics tool selection is, in practice, an architecture decision — and that means it requires a structured process rather than a feature-by-feature comparison with a preferred vendor's slide deck.

AI Is Changing What 'Analytics Tool' Even Means

The emergence of agentic AI tools like Claude Code adds a further dimension to this decision. Claude Code has become popular among developers and programming-savvy data analytics teams learning to use AI capabilities in their stack. It connects to marketing platforms via APIs, writes and runs the scripts that extract and transform data, and can build internal tools tailored to specific analytical questions. For teams with access to first-party data and a basic understanding of their data schemas, this creates a genuine alternative to purchasing a prepackaged marketing analytics platform for certain classes of analytical work.

For example, Firecrawl's marketing team reports using Claude Code to build fully custom analytics reporting pipelines — pulling from Google Analytics 4, monitoring brand mentions and generating weekly performance summaries — replacing workflows that previously required separate SaaS subscriptions.

Other marketing execs and professionals believe analytics is a starting point for reviewing the martech stack.

Cal Singh, head of marketing and partnerships at Equipment Leasing Canada, has seen how backwards stack architecture can get while restructuring marketing stacks.

"Most of the decisions that are made in martech are made backwards," Singh explains. "Someone sees a new tool, becomes excited and constructs a rationale for its inclusion in retrospect. The stack gets heavier, integrations get messier and three months later no one is sure of explaining what is actually driving results." Singh believes that teams must ensure "that the infrastructure behind the analytics is trustworthy."

The 5-Step Analytics Stack Selection Framework

The five steps below are designed to be run in sequence before any new analytics tool enters a formal evaluation of martech stack changes. These steps reorient the selection decision around the work the analytics function actually needs to do, the data it already has available and the specific places where a new tool would produce a measurable improvement over the current state. Each step also identifies where agentic AI can accelerate or extend the work, for teams that want to close capability gaps without expanding their vendor roster.

Let’s look at each step more closely, followed by a summary table.

Step 1: Audit Before You Buy

This first step sounds basic, but you would be surprised how often it is skipped. A useful audit inventories every analytics-adjacent tool in the stack — including tools in the CRM, customer data platform (CDP), ad platforms and customer success software that produce reporting outputs — and maps each to a specific business question it answers. Any tool that cannot be mapped to a question with a named decision-maker gets flagged for potential elimination rather than replacement.
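The audit rule above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: the `ToolRecord` structure, the `flag_for_elimination` helper and the sample inventory are all hypothetical names invented for this example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ToolRecord:
    name: str
    business_question: Optional[str]  # question the tool's reporting answers
    decision_maker: Optional[str]     # named person who acts on the answer


def flag_for_elimination(inventory: List[ToolRecord]) -> List[str]:
    """Return tools that lack a mapped question or a named decision-maker."""
    return [
        tool.name
        for tool in inventory
        if not (tool.business_question and tool.decision_maker)
    ]


# Hypothetical inventory: names, questions and owners are illustrative only.
inventory = [
    ToolRecord("web_analytics", "Which channels drive qualified signups?", "Head of Growth"),
    ToolRecord("crm_reports", "Which segments renew at the highest rate?", None),
    ToolRecord("legacy_dashboard", None, None),
]

flagged = flag_for_elimination(inventory)
print(flagged)
```

In practice the inventory would likely live in a shared spreadsheet or warehouse table; the point is that the flagging rule is mechanical once each tool has a question and an owner recorded against it.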

Step 2: Define the Analytical Questions First

This step has the most influence on the final decision. Before opening a single vendor proposal, the analytics team should produce a ranked list of the business questions that current tools cannot answer or answer poorly. Those questions become the evaluation criteria. A tool that addresses the top three questions on the list with clean, governed data gets evaluated seriously; a tool that does not address those questions, regardless of its feature set or analyst ranking, does not advance. Marketing teams often coalesce around the highest-priority unanswered questions, which helps them coordinate their decisions.
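One way to make that gate explicit is a small filter over the ranked list. The sketch below assumes the team has already recorded which questions each candidate can answer; the function name `tools_that_advance`, the questions and the candidate names are hypothetical.

```python
from typing import Dict, List, Set

TOP_N = 3  # the "top three questions" threshold described above


def tools_that_advance(
    ranked_questions: List[str],
    coverage: Dict[str, Set[str]],
    top_n: int = TOP_N,
) -> List[str]:
    """Keep only tools that answer every one of the top-ranked questions."""
    must_answer = set(ranked_questions[:top_n])
    return [tool for tool, answered in coverage.items() if must_answer <= answered]


# Hypothetical ranked list, highest priority first.
ranked_questions = [
    "Which channels drive retained customers?",
    "What is acquisition cost by cohort?",
    "Which campaigns lift repeat purchases?",
    "How do webinar attendees convert?",
]

# Hypothetical coverage notes gathered during evaluation.
coverage = {
    "candidate_a": set(ranked_questions[:3]),
    "candidate_b": {ranked_questions[0], ranked_questions[3]},
}

advancing = tools_that_advance(ranked_questions, coverage)
print(advancing)
```

The filter deliberately ignores feature counts and analyst rankings, so a candidate with a long feature list still drops out if it misses one of the top questions.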

Step 3: Prioritize Evaluating Data Connectivity Over Solution Features

Feature evaluation is the form of vendor comparison teams use most often, and it is also the form most susceptible to vendor influence. You don’t want to pick a solution expecting a feature that is not immediately available. A feature that exists on a product roadmap or as a beta capability can appear on a comparison matrix the same way as an in-production feature, yet it could be delayed indefinitely. A roadmap carries no delivery guarantee, and procurement decisions built on unshipped capabilities frequently produce technical debt.

Starting with data connectivity shows how a candidate fits your martech stack using features that are available today. Before any tool advances in the selection process, the evaluation team should see how the solution handles a sample of the team's own data schema and ask the vendor to demonstrate the integration work.
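A schema sample test does not need to be elaborate. The sketch below checks a few sample rows, such as a vendor sandbox might export, against the fields the warehouse expects. The field names in `REQUIRED_SCHEMA`, the `check_connectivity` helper and the sample rows are assumptions made for illustration, not any vendor's actual format.

```python
from typing import Any, Dict, List, Tuple

# Hypothetical fields the existing warehouse expects for each event.
REQUIRED_SCHEMA = {
    "customer_id": str,
    "event_name": str,
    "event_ts": str,
    "revenue": float,
}


def check_connectivity(
    sample_rows: List[Dict[str, Any]],
    required: Dict[str, type] = REQUIRED_SCHEMA,
) -> List[Tuple[int, str, str]]:
    """List (row index, field, problem) for missing or mistyped fields."""
    issues = []
    for index, row in enumerate(sample_rows):
        for field, expected in required.items():
            if field not in row:
                issues.append((index, field, "missing"))
            elif not isinstance(row[field], expected):
                found = type(row[field]).__name__
                issues.append((index, field, f"expected {expected.__name__}, got {found}"))
    return issues


# Hypothetical sample rows from a vendor sandbox export.
sample_rows = [
    {"customer_id": "c-1", "event_name": "purchase", "event_ts": "2025-01-06T09:00:00Z", "revenue": 19.99},
    {"customer_id": "c-2", "event_name": "purchase", "revenue": "19.99"},
]

issues = check_connectivity(sample_rows)
print(issues)
```

An empty result is not proof that the integration will hold up at scale, but a non-empty one gives the team concrete items to put in front of the vendor before a contract is signed.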

Step 4: Pilot on a Contained Use Case

A proof-of-concept on a single, well-defined analytical use case produces more actionable insights than any amount of reference checking or analyst briefing. The use case should have a defined trial period and success metric. The outcome should yield clear deltas for any improvement claimed. Ultimately teams should ask themselves, “Does the candidate tool produce more actionable insight per analyst-hour than the current approach?”
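The closing question can be turned into a simple ratio. The sketch below assumes the team counts "actionable insights" by its own agreed definition and logs analyst hours during the pilot; `PilotResult`, `relative_delta` and the sample figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PilotResult:
    label: str
    actionable_insights: int  # counted by the team's own agreed definition
    analyst_hours: float

    def insights_per_hour(self) -> float:
        if self.analyst_hours <= 0:
            raise ValueError("analyst_hours must be positive")
        return self.actionable_insights / self.analyst_hours


def relative_delta(candidate: PilotResult, incumbent: PilotResult) -> float:
    """Fractional change in insights per analyst-hour versus the incumbent."""
    baseline = incumbent.insights_per_hour()
    if baseline == 0:
        raise ValueError("incumbent produced no insights; compare absolute rates instead")
    return (candidate.insights_per_hour() - baseline) / baseline


# Hypothetical pilot figures for one contained use case.
incumbent = PilotResult("current approach", actionable_insights=6, analyst_hours=40)
candidate = PilotResult("candidate tool", actionable_insights=9, analyst_hours=36)

delta = relative_delta(candidate, incumbent)
print(f"{delta:.0%} change in insights per analyst-hour")
```

Agreeing on what counts as an actionable insight before the pilot starts matters more than the arithmetic; otherwise the delta can be argued in either direction.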

Step 5: Choose for an Active Analyst Culture

The final step is a culture check as much as a technical one. The right question at this stage is not "does the demo look impressive?" but "can the analyst act on this output within 24 hours?" If the answer is no, the tool is producing reporting rather than analysis. This also matters for agentic AI solutions. When teams are comfortable using a solution and speaking to its results, they open a path for lightweight internal tools (custom anomaly detectors, automated attribution models, real-time spend alerts) to be purpose-built for the analysts who use them.
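The 24-hour test can be tracked with a short script if the team logs when an output became available and when someone acted on it. The sketch below is one possible way to do that; the `share_acted_within_window` helper and the log entries are hypothetical.

```python
from datetime import datetime, timedelta
from typing import List, Optional, Tuple

ACTION_WINDOW = timedelta(hours=24)  # the 24-hour threshold described above

# Each entry: (when the output was ready, when someone acted on it, or None).
LogEntry = Tuple[datetime, Optional[datetime]]


def share_acted_within_window(
    log: List[LogEntry],
    window: timedelta = ACTION_WINDOW,
) -> float:
    """Fraction of outputs that led to an action inside the window."""
    if not log:
        return 0.0
    on_time = sum(
        1 for ready, acted in log
        if acted is not None and acted - ready <= window
    )
    return on_time / len(log)


# Hypothetical log from a pilot week.
ready = datetime(2025, 1, 6, 9, 0)
log = [
    (ready, ready + timedelta(hours=5)),   # acted the same day
    (ready, ready + timedelta(hours=30)),  # acted, but too late
    (ready, None),                         # never acted on
    (ready, ready + timedelta(hours=24)),  # acted exactly at the limit
]

share = share_acted_within_window(log)
print(share)
```

A low share does not necessarily mean the tool is at fault; it may point to an approval process or ownership gap, which is why the article frames this step as a culture check.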

Selecting Your Analytics Stack for Marketing Technology

The table below condenses our five-step guide for analytics in marketing technology. Together, the five steps raise a strategic question: "What does this tool do that our current environment cannot?" Running that question rigorously through each step surfaces the tools that genuinely extend analytical capability and identifies the underutilized ones. The agentic AI column is not a suggestion to build everything with AI automatically. It simply shows which activities may be a fit.

| Step | Focus | Analytics Lens | Potential Agentic AI Fit |
|---|---|---|---|
| 1. Audit Before You Buy | Map current tool inventory against measurable business outcomes | Identify which tools produce no unique data, duplicate outputs, or block data integration | Query your existing GA4, Looker, or warehouse data to surface utilization gaps and redundant event streams before the first vendor call |
| 2. Define the Analytical Questions First | Establish the specific business questions analytics must answer before evaluating platforms | Prioritize measurement of customer acquisition cost, conversion attribution, and retention by cohort | Generate exploratory analysis scripts against first-party data to clarify which questions current tools already answer, closing speculation before it drives purchases |
| 3. Prioritize Evaluating Data Connectivity Over Solution Features | Score candidates by how cleanly they exchange data with your existing warehouse and identity layer | Test vendor APIs against real data schemas; prioritize tools with documented event taxonomies | Write and run API test scripts that pull sample data from vendor sandboxes into your existing analytics environment, confirming integration before contract |
| 4. Pilot on a Contained Use Case | Run a 60-day proof-of-concept on one analytics use case with defined success metrics before broader deployment | Measure signal-to-noise ratio: does the tool generate more insight per hour of analyst time than the incumbent? | Automate pilot reporting pipelines (pulling, cleaning, and summarizing data from the candidate tool alongside legacy outputs), making the comparison objective and reproducible |
| 5. Choose for an Active Analyst Culture | Select tools that surface decision-ready outputs for the people running campaigns, not dashboards designed to impress in QBRs | Validate that outputs can be acted on within 24 hours: does the tool reduce time from data to decision? | Build lightweight internal tools (alert systems, anomaly detectors, custom attribution models) that extend the analytics stack without a new vendor license |

What to Expect Next: Equipping Your Marketing Team for Smarter Selection

Marketing teams that work through these five steps will arrive at vendor evaluations with significantly more leverage than those that enter through a demo request. They will know which analytical questions are genuinely unanswered, which data flows are structurally broken and which capability gaps are large enough to justify a new vendor relationship rather than being addressable through better use of existing tools or an agentic AI workflow. That knowledge changes an evaluation team's planning conversations: instead of being guided through a vendor's preferred narrative, the team can direct the conversation toward the specific integration tests and use-case pilots that actually predict deployment success.

A good first experiment is to run the audit step on the current analytics layer alone, not the entire stack, before the next renewal cycle. Identify which tools in the analytics inventory are producing outputs that drive weekly decisions, which are producing outputs that appear in quarterly presentations and nowhere else, and which are collecting data that no dashboard references. That audit alone will surface consolidation opportunities that reduce cost and complexity simultaneously.

The broader lesson is that marketing technology is an adoption and governance problem that selection processes have been solving with the wrong tool — more evaluation, more procurement, more contracts. A framework that starts with analytical questions, tests connectivity before commitment and measures decision speed as the primary success criterion does not just produce better tool choices. It builds the organizational habit of treating the analytics stack as a working instrument rather than a trophy case assembled from impressive vendor claims.
