The Gist

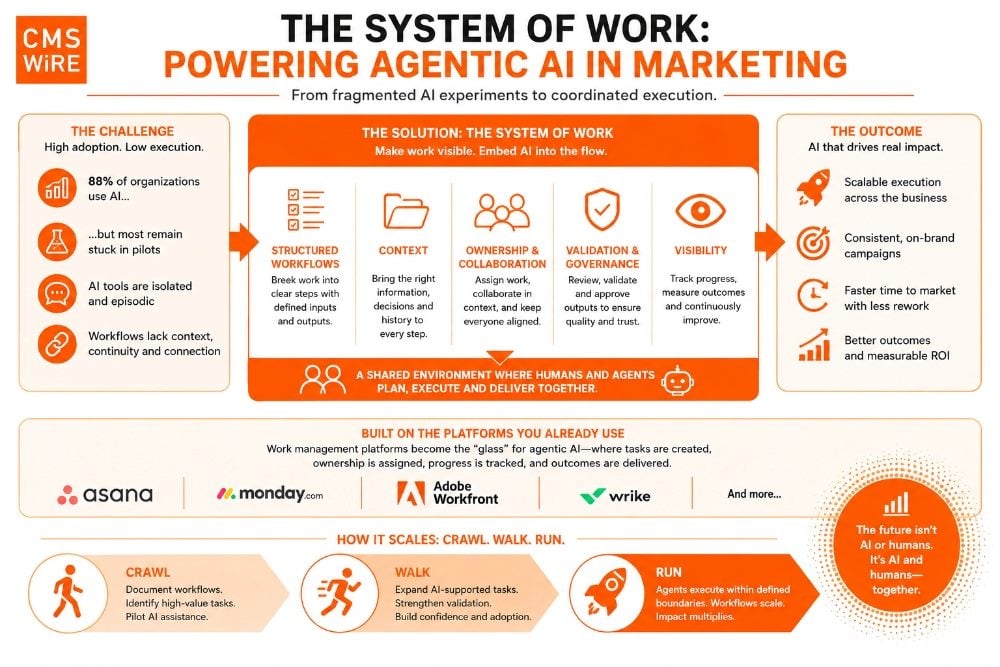

- AI adoption is widespread, but execution is fragmented. Most organizations now use AI somewhere in the business, yet many marketing teams remain stuck in disconnected pilots, isolated copilots and inconsistent workflows.

- Workflow clarity matters more than adding another AI tool. The article argues that organizations need to map and structure how work actually happens before agentic AI can scale reliably.

- Work management platforms may become the operational “glass” for AI. Systems like Asana, Monday.com and Adobe Workfront could evolve into the shared coordination layer where humans and AI agents work on the same tasks.

It’s hard to argue that AI hasn’t arrived.

Adoption has reached near ubiquity. McKinsey & Company’s 2025 State of AI report found that 88% of organizations now use AI in at least one business function, up significantly year over year.

But what’s more telling is what comes next. Despite that widespread adoption, nearly two-thirds of organizations are still stuck in pilots and haven’t scaled AI across the business.

On the surface, it looks like success.

But talk to marketing teams, content teams, or digital experience teams, and a different story starts to emerge.

Yes, people are using AI. But it’s uneven. It’s inconsistent. It’s often invisible. And in many cases, it quietly stalls after the initial excitement wears off.

AI Adoption vs. AI Execution Reality

AI is everywhere in theory, but far less consistent in practice across marketing workflows.

| What Looks Like Progress | What’s Actually Happening | Impact on Teams |
|---|---|---|
| 88% of organizations using AI | Most stuck in pilot mode | No scaled operational impact |
| AI features embedded in tools | Disconnected, one-off usage | Fragmented workflows |
| Copilot-style interfaces | Episodic, context resets each use | Lost continuity across campaigns |
| Fast content generation | No link to execution systems | Manual handoffs and rework |

Part of the issue is how AI is being introduced into the tools marketers already use.

Many platforms have rushed to add AI features: buttons to “generate copy,” “rewrite content,” or “summarize this page.” These are helpful in the moment, but they tend to be isolated. They don’t carry context from one step of a campaign to the next. They don’t understand the broader objective. And they rarely connect to the downstream work required to actually execute.

Table of Contents

- Why Copilot Experiences Often Stall

- Rethinking the Work Before Rethinking the Tools

- Why Context Becomes the Real Infrastructure

- From Tasks to Participation: Where Agents Fit in the Workflow

- The System of Work: A Natural Home for Humans and Agents

- The Reality Behind the Glass: Why Agentic Execution Is Still Complex

- Composable Architecture Will Define Scalable Agentic AI

- Where to Start: From Understanding to Execution

Why Copilot Experiences Often Stall

Alongside those features, we’ve seen the rise of copilot-style chat interfaces embedded across applications.

AI Without Workflow Memory

These experiences are flexible, but they are also inherently episodic. Each interaction starts fresh. Context is limited to what’s manually provided. Outputs live outside of structured workflows, often copied and pasted into documents, tickets, or content management systems. Over time, that disconnect becomes more pronounced. The AI may be smart, but it isn’t aware of the work.

At the same time, some of the more powerful capabilities are exposed in ways that aren’t designed for everyday business users. They require setup, configuration, or ongoing management that doesn’t align with how marketing teams operate. Even when these approaches unlock real value, they don’t scale across an organization.

The result is a fragmented experience.

The Difference Between Assistance and Execution

AI is present but not coordinated. It’s helpful, but not dependable. It accelerates individual tasks but doesn’t move the workflow forward in a consistent way.

And that’s where the momentum starts to fade.

For AI to truly take hold in marketing, it needs to be embedded into the flow of work itself. That means breaking work down into manageable, well-defined steps. It means having clear points where outputs can be assigned, reviewed and validated. And it means creating a shared surface where both humans and machines participate in the same process.

In other words, the “glass” for agentic AI can’t be another tool layered on top. It has to be the same systems we already use to manage work, where tasks are created, ownership is defined, progress is tracked, and outcomes are delivered, regardless of whether the work is being done by a person or an agent.

Rethinking the Work Before Rethinking the Tools

Before thinking about how AI fits into marketing workflows, it’s worth taking a step back and looking at how those workflows actually function today.

Because in many cases, they’re not as clearly defined as we might assume.

Marketing work is inherently complex, but it’s often managed informally. Campaigns move forward through a combination of briefs, meetings, messages, and shared understanding. Teams know what needs to happen, but that knowledge is distributed. Some of it lives in documents. Some of it lives in tools. A lot of it lives in people’s heads.

As teams scale, timelines compress, and expectations increase, the gaps start to show. Steps get skipped. Context gets lost. Content is created without full alignment to the original objective. Reviews happen late or not at all. And the result is often rework, inconsistency, or missed opportunities.

At its core, though, the work follows a pattern.

Campaigns aren’t a single action. They’re a series of interconnected steps. A brief is created. Content is drafted. Assets are produced. Pages are assembled. Reviews happen. Approvals are given. Changes are made. Eventually, something is published.

Even if that process isn’t formally documented, it exists. The first step in improving it is making it visible. That means breaking work down into its component parts and understanding what each step actually requires.

What information is needed to start the work? Who is involved? What decisions need to be made? What does a completed step look like? What happens next?

Why Context Becomes the Real Infrastructure

This is where context becomes critical. Each step depends on more than just a task description. It depends on the broader campaign objective, the audience, the messaging, the constraints and the decisions that have already been made. Without that context, even well-executed work can miss the mark.

It also means recognizing that collaboration isn’t incidental; it’s fundamental.

Work doesn’t move forward on its own. It moves through people. Through handoffs, feedback and validation. Through moments where something is reviewed, challenged, refined and approved.

Those moments are often where quality is determined.

But they’re also where inconsistency creeps in when the process isn’t clearly defined.

Workflow Visibility Creates Operational Consistency

When you start to map this out, something important becomes clear. Marketing workflows are already structured. They’re just not always structured explicitly.

And until they are, it’s difficult to create consistency. Difficult to scale. Difficult to introduce anything new, whether that’s a new tool, a new process, or a new way of working.

Understanding the process, defining the context and making collaboration visible isn’t just an exercise in documentation. It’s the foundation for rethinking how work gets done.

From Tasks to Participation: Where Agents Fit in the Workflow

What Makes a Workflow AI-Ready

Before agents can participate, workflows need to be explicit, structured and repeatable.

| Workflow Element | What It Requires | Why It Matters for AI |
|---|---|---|
| Defined steps | Clear sequence of tasks | Enables task-specific agent support |
| Context | Briefs, audience, messaging, constraints | Prevents low-quality or misaligned outputs |
| Ownership | Assigned responsibility per step | Maintains accountability across humans and agents |
| Validation | Defined review and approval criteria | Builds trust in agent-generated work |
| Visibility | Shared system for tracking progress | Keeps workflows coordinated and scalable |

Once the work is visible and campaigns are broken down into their component steps, the next question becomes much more practical.

Where, within those steps, can work be supported or accelerated? And just as importantly, what needs to surround that work to ensure it’s done correctly? When you start looking at marketing workflows through this lens, the idea of “using AI” becomes much more concrete. Instead of asking a broad question like “Can AI help with this campaign?”, you begin to evaluate individual steps.

A content brief is created. Copy is drafted. A landing page is assembled. Components are configured in a Digital Experience Platform (DXP). Content is reviewed for brand and compliance. Pages are tested and approved.

Each of these is a task. Each has a clear objective. And each requires a specific set of inputs to be completed successfully.

Some of those tasks are obvious candidates for agent support: drafting content, suggesting variations, assembling page structures, identifying gaps, or even configuring components based on known patterns.

But this doesn’t mean designing a single, all-knowing agent that can handle everything.

In practice, that approach quickly becomes brittle. The more responsibilities an agent has, the harder it becomes to control, validate and trust its output. A more effective model is to think in terms of task-specific agents. Each agent is responsible for a clearly defined step. It has a focused purpose. It operates with a known set of inputs. And it produces an output that fits directly into the workflow.

The real design challenge isn’t building one powerful agent. It’s defining what each agent needs in order to do its job well.

Validation Is What Makes Agentic Work Trustworthy

That brings two things into focus: validation and context.

The moment you introduce an agent into a task, you also introduce the need to validate its output.

- What does “done” look like for this step?

- Who reviews it?

- What criteria determine whether it can move forward?

Without clear answers, the burden shifts back to the team to figure it out after the fact, often negating any efficiency gains. When the workflow is well-defined, validation becomes just another step in the process. An agent drafts content. A reviewer validates tone and messaging. A compliance check ensures regulatory alignment. A final approval confirms readiness for publication.

Each step is explicit. Each has ownership. And each builds on the one before it.
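To make that chain concrete, here is a minimal sketch of validation as an ordered set of gates. Everything in it is an assumption for illustration: the `Gate` class, the `run_gates` function and the three placeholder checks are hypothetical, and in practice each check would be a human review or a policy tool rather than a one-line rule.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Gate:
    name: str
    owner: str                      # who is accountable for this check
    passes: Callable[[str], bool]   # True when the draft may move forward


def run_gates(draft: str, gates: List[Gate]) -> Tuple[bool, List[str]]:
    """Apply gates in order and stop at the first failure."""
    log: List[str] = []
    for gate in gates:
        ok = gate.passes(draft)
        log.append(f"{gate.name} ({gate.owner}): {'pass' if ok else 'fail'}")
        if not ok:
            return False, log
    return True, log


# Placeholder rules standing in for real review decisions (assumptions).
BANNED_CLAIMS = ("guaranteed", "risk-free")

gates = [
    Gate("tone and messaging", "brand reviewer", lambda d: not d.isupper()),
    Gate("compliance", "legal",
         lambda d: not any(word in d.lower() for word in BANNED_CLAIMS)),
    Gate("final approval", "campaign owner", lambda d: len(d) <= 200),
]

approved, log = run_gates("Save time on every campaign.", gates)
for line in log:
    print(line)
```

The point of the sketch is the shape, not the rules: each gate has a name and an owner, and a draft cannot skip ahead of a failed check.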

Is Your Context Defined and Available?

The second requirement is context. For an agent to perform a task reliably, it needs access to the same inputs a person would rely on.

That might include campaign briefs, brand guidelines and prior content. It could involve design systems and component definitions from tools like Figma. In other cases, it may require structured content models, documentation or access to systems that define how experiences are assembled.

The effectiveness of the agent is directly tied to how well that context is defined and made available.

What starts to emerge is a different way of thinking about workflows.

Not as a collection of loosely connected activities, but as a sequence of clearly defined tasks, each with a purpose, a set of required inputs, an expected output, and a validation step.

Once work is structured in this way, it becomes much easier to see where agents can participate, where humans need to stay involved, and how the two can work together without creating friction.
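One way to picture a task defined this way is as a small record with a field for each of those elements. The sketch below is illustrative only: `WorkflowTask`, its field names and the sample campaign steps are assumptions, not a schema taken from any work management platform.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class WorkflowTask:
    name: str
    purpose: str = ""
    required_inputs: List[str] = field(default_factory=list)
    expected_output: str = ""
    validation: str = ""   # who reviews the work, and against what criteria
    owner: str = ""        # a person or an agent

    def gaps(self) -> List[str]:
        """Return the names of elements that are still undefined."""
        checks = {
            "purpose": self.purpose,
            "required_inputs": self.required_inputs,
            "expected_output": self.expected_output,
            "validation": self.validation,
            "owner": self.owner,
        }
        return [label for label, value in checks.items() if not value]


campaign = [
    WorkflowTask(
        name="Draft landing page copy",
        purpose="Explain the offer to the target audience",
        required_inputs=["campaign brief", "brand guidelines"],
        expected_output="Headline, subhead and body copy",
        validation="Brand reviewer checks tone and messaging",
        owner="copy-drafting agent",
    ),
    # Only partly defined: a weak candidate for agent support as it stands.
    WorkflowTask(name="Assemble landing page", owner="web producer"),
]

for task in campaign:
    print(task.name, "->", task.gaps() or "ready")
```

A step that reports gaps is not necessarily blocked for a person, who can fill them in from experience, but it is a poor fit for an agent until those gaps are closed.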

But structure alone isn’t enough.

Those tasks need to live somewhere. They need to be visible. They need clear ownership, defined assignments and a shared space where progress can be tracked and collaboration can happen. And that applies equally whether the work is being performed by a person or supported by an agent.

Because without that shared layer, even well-defined workflows fall back into the same pattern: disconnected steps, unclear accountability, and outputs that exist outside the flow of work.

Which raises the next question:

If both humans and agents are contributing to the same set of tasks, what is the system that brings that work together?

The System of Work: A Natural Home for Humans and Agents

If workflows are made up of clearly defined tasks, with context, ownership and validation built into each step, the next question becomes obvious.

Where does that work actually live?

For most marketing organizations, the answer already exists.

Over the past decade, a category of tools has emerged to manage exactly this kind of complexity. Platforms like Asana, Monday.com, Adobe Workfront, and Wrike weren’t designed specifically for AI. They were built to help teams plan, coordinate, and execute work that doesn’t fit neatly into rigid systems.

Marketing is a perfect example of that kind of work. Campaigns evolve. Requirements shift. Dependencies span teams, tools, and timelines. The process is structured, but not static. It needs to adapt as work progresses.

That’s why these platforms have become so central. They provide a flexible layer where workflows can be modeled, tasks can be created and assigned, dependencies can be managed, and collaboration can happen in context. They don’t force teams into a single way of working. Instead, they allow workflows to emerge, evolve, and be refined over time.

In many ways, they have already become the system of record for how work gets done. What’s changing now is not the role these tools play, but what, or who, can participate within them.

Up until now, these systems have been built around human actors. Tasks are assigned to people. Comments capture discussion. Status updates reflect progress. The entire model assumes that work is being executed by individuals moving tasks forward step by step.

From Human Ownership to Shared Execution

But as workflows become more clearly defined, and as tasks are broken down into discrete units with well-understood inputs and outputs, something starts to shift.

Those same tasks don’t necessarily need to be completed exclusively by people. Some can be supported. Some can be accelerated. Some can be handled entirely by agents operating within defined boundaries. And when that happens, the system of work doesn’t need to change.

It simply expands to include a new type of participant. This is where work management tools start to take on a new role.

How the System of Work Evolves With AI

Work management platforms are shifting from coordination tools to execution environments for both humans and agents.

| Traditional Model | Emerging Model | What Changes |
|---|---|---|
| Tasks assigned only to people | Tasks assigned to people and agents | Expanded workforce within the same system |
| Work tracked manually | Agents update status and trigger next steps | More automated workflow progression |
| Context scattered across tools | Context accumulates in one system | Better continuity and decision-making |
| Outputs live outside workflows | Outputs generated and validated in-flow | Reduced rework and friction |
| AI used as assistant | AI participates in execution | Shift from help to operational impact |

They become the “glass” through which work is executed and observed. The place where tasks are created, where ownership is assigned, where progress is tracked, and where outcomes are delivered. Not just for humans, but for agents as well.

An agent doesn’t need a separate interface if it can operate within the same system.

It can be assigned to a task. It can produce an output. It can update status. It can trigger the next step in a workflow. And just like any other participant, its work can be reviewed, validated, and refined.
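As a rough illustration of that lifecycle, the sketch below models a task that can be assigned to either a person or an agent. It is an in-memory toy under stated assumptions: the `Task` record, the `AGENTS` lookup and the `work` and `approve` functions are hypothetical and do not correspond to the API of Asana, Monday.com, Workfront or Wrike.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class Task:
    title: str
    assignee: str
    status: str = "todo"                 # todo -> in_review -> done
    output: Optional[str] = None
    history: List[str] = field(default_factory=list)


# Hypothetical agents: plain functions that take a task and return an output.
AGENTS: Dict[str, Callable[[Task], str]] = {
    "draft-agent": lambda task: f"Draft for: {task.title}",
}


def work(task: Task) -> None:
    """If the assignee is an agent, produce output and hand off for review."""
    agent = AGENTS.get(task.assignee)
    if agent is None:
        return  # assigned to a person, so there is nothing to automate here
    task.output = agent(task)
    task.status = "in_review"
    task.history.append(f"{task.assignee} produced output")


def approve(task: Task, reviewer: str, next_task: Optional[Task] = None) -> None:
    """A reviewer approves the work, which unblocks the next step."""
    if task.status != "in_review":
        raise ValueError("only work in review can be approved")
    task.status = "done"
    task.history.append(f"{reviewer} approved")
    if next_task is not None:
        next_task.history.append(f"unblocked by '{task.title}'")


draft = Task("Write email copy", assignee="draft-agent")
build = Task("Build email in platform", assignee="maria")

work(draft)
approve(draft, reviewer="sam", next_task=build)
print(draft.status, draft.history)
```

The agent and the human reviewer write to the same task history, which is what lets the record double as context for later work.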

Everything happens in one place. Over time, something even more valuable begins to take shape. These systems accumulate context.

Every task carries information about what was done, why it was done, who was involved, what decisions were made, and how the work evolved. Conversations are preserved. Feedback is documented. Outcomes are visible.

This isn’t just a historical record. It’s operational context. And that context is exactly what agents need in order to operate effectively.

Instead of relying on isolated prompts or manually provided inputs, agents can draw from the same shared understanding that teams use to guide their work. They can see how similar tasks were completed in the past. They can align to established patterns. They can operate with a much clearer sense of what “good” looks like in a given context.

What makes this model compelling is how naturally it fits.

There’s no need to introduce a new system for managing agents. No need to create parallel workflows or duplicate context across tools. The structure is already there. The workflows are already defined. The collaboration is already happening.

By extending these systems to include agents as participants, organizations gain a consistent, visible, and scalable way to integrate AI into the flow of work. And in doing so, they move one step closer to making AI not just accessible, but operational.

The Reality Behind the Glass: Why Agentic Execution Is Still Complex

If the system of work becomes the “glass” through which both humans and agents collaborate, it’s tempting to assume the rest will fall into place. In reality, that’s where the complexity begins.

Most work management platforms already provide the technical foundations needed to connect with external systems. They support integrations, APIs, and webhooks that make it possible to trigger actions, pass data, and coordinate work across tools. On paper, this makes it entirely feasible to connect a task to an agent, have that agent perform work, and return the result into the flow.

But “possible” and “practical” are not the same thing.

Today, stitching these systems together still requires deliberate design. It often depends on custom integrations, orchestration layers, and patterns that aren’t yet standardized. And while business users may interact with the workflow, the underlying execution model still requires technical alignment to ensure things run reliably.

At the same time, the platforms themselves are evolving. Asana has introduced AI Teammates that can operate within project context. Monday.com is embedding AI into workflow automations and updates. Adobe Workfront and Wrike are layering AI into content operations and approvals.

These are important steps because they bring AI closer to where work is actually happening, but they also highlight a limitation. Most of these capabilities are still bounded by the platform itself. They work well for contained tasks, but they don’t yet extend seamlessly across the broader ecosystem where marketing work is executed.

That broader ecosystem is where the real challenge lies. The agent infrastructure that supports these workflows will not come from a single platform. It will be a mix of embedded capabilities and enterprise-grade agents aligned to an organization’s AI strategy, particularly when it comes to governance, auditing, security, and cost control.

Platforms like Azure AI Foundry and Amazon Bedrock are designed to support this layer, providing the ability to orchestrate more complex agents, manage access to tools and data, and ensure that execution aligns with enterprise standards.

As tasks become more sophisticated, that separation becomes necessary. An agent that drafts content may live close to the workflow, but an agent that needs to interact with multiple systems, process structured data, or execute multi-step operations may need to run in a dedicated environment outside of the work management platform itself.

Composable Architecture Will Define Scalable Agentic AI

This is where interoperability and composability become critical. For agents to operate effectively, they need consistent, reliable ways to interact with the systems where work is executed. Standards like the Model Context Protocol are beginning to define how agents can discover and use tools across environments, but adoption is still early.

More importantly, the systems themselves need to be designed for this model. If agents are going to support marketing workflows end-to-end, they need to interact with content systems as first-class participants. That requires more than basic integration. It requires platforms to expose their capabilities in a way that is discoverable, composable, and aligned to how agents operate.

In a composable architecture, capabilities are exposed as modular, reusable services that can be orchestrated across workflows. This makes it significantly easier for agents to find what they need, take action, and move work forward without relying on brittle, one-off integrations. It aligns naturally with a task-based model, where each step in a workflow can call into well-defined operations, whether that’s retrieving content, updating a component, or publishing an experience.
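A small sketch can show what "discoverable and composable" means in practice. The registry below is a plain in-process stand-in, not an implementation of the Model Context Protocol or of any vendor's API; the capability names, the `PAGES` store and the `discover` and `invoke` helpers are all assumptions made for illustration.

```python
from typing import Callable, Dict

CAPABILITIES: Dict[str, Callable[..., object]] = {}


def capability(name: str):
    """Register a function under a discoverable name."""
    def register(fn):
        CAPABILITIES[name] = fn
        return fn
    return register


# In-memory stand-in for a content system (assumption).
PAGES = {"spring-sale": {"hero": "Old headline", "published": False}}


@capability("content.retrieve")
def retrieve(page_id: str) -> dict:
    """Return a copy of the stored page."""
    return dict(PAGES[page_id])


@capability("component.update")
def update(page_id: str, component: str, value: str) -> None:
    """Replace one component's value on a page."""
    PAGES[page_id][component] = value


@capability("experience.publish")
def publish(page_id: str) -> None:
    """Mark a page as published."""
    PAGES[page_id]["published"] = True


def discover() -> Dict[str, str]:
    """What an agent would see: capability names with short descriptions."""
    return {name: (fn.__doc__ or "") for name, fn in sorted(CAPABILITIES.items())}


def invoke(name: str, **kwargs):
    """Call a capability by name, the way a workflow step might."""
    return CAPABILITIES[name](**kwargs)


invoke("component.update", page_id="spring-sale", component="hero", value="New headline")
invoke("experience.publish", page_id="spring-sale")
print(discover())
```

Because each operation is addressed by name and described in one place, a workflow step can call it without knowing how the underlying system is built, which is the property that one-off integrations tend to lack.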

Why Legacy Platforms Become Friction Points

Many legacy platforms struggle in this environment. They were not designed to expose their functionality in a structured or accessible way. Their APIs may be limited, inconsistent, or tightly coupled to internal logic, making it difficult to orchestrate them as part of a broader workflow. As a result, even if agents are introduced, their ability to act is constrained. In some cases, that will mean rethinking or replacing systems that can’t support this level of interaction.

Taken together, this points to a more layered approach. Work management tools continue to serve as the coordination layer, the place where work is defined, tracked, and completed. They remain the glass through which both humans and agents operate. Beneath that, a mix of embedded and enterprise-grade agents provides execution, each designed for specific tasks and governed according to organizational needs.

The opportunity is clear, but realizing it requires more than adding AI to existing tools. It requires aligning workflows, platforms, and infrastructure so they can support a more connected, interoperable, and ultimately scalable way of working.

Where to Start: From Understanding to Execution

If there’s a common thread across all of this, it’s that the challenge isn’t access to AI. It’s how to make it part of the way work actually gets done.

Marketing teams already have workflows. They already collaborate across systems. They already manage tasks, dependencies, approvals, and outcomes. What’s missing isn’t structure. It’s visibility into that structure, and a consistent way to evolve it.

The path forward doesn’t start with building agents. It starts with understanding the work.

Making workflows explicit. Breaking them down into the steps that actually happen. Identifying the context required at each stage. Clarifying where decisions are made, where validation is needed, and how work moves forward.

That alone is progress. Because once the work is visible, it becomes much easier to see where support can be introduced.

From there, it’s not about automating everything at once. A more effective approach is incremental.

Start with a single task. Something well-defined. Something where the inputs and outputs are clear. Introduce support. Observe how it performs. Refine the process around it. Add validation where needed. Improve the context.

Then repeat.

Over time, what begins as a single point of acceleration becomes part of a broader pattern. Workflows become more consistent. Context becomes more structured. Collaboration becomes more visible. And the role of agents becomes clearer, not as replacements, but as contributors within a defined system.

The Crawl, Walk, Run Reality of AI Operations

This is less about transformation in a single moment, and more about evolution over time.

A crawl, walk, run progression.

- Crawl: understand and document how work gets done today.

- Walk: introduce support into well-defined parts of the workflow.

- Run: connect those capabilities into a cohesive system where humans and agents operate together seamlessly.

Each stage builds on the last. What’s emerging is not a new category of tools, but a new way of thinking about how work is executed.

Work management systems become the shared surface where everything comes together. Agents operate within and alongside those workflows. Infrastructure supports execution behind the scenes. And the organization gains a more consistent, visible, and scalable way to deliver outcomes.

The direction is not toward more tools or more isolated capabilities, but toward a model where work is understood, structured, and continuously improved, with both humans and agents contributing to the same system.
