The Gist

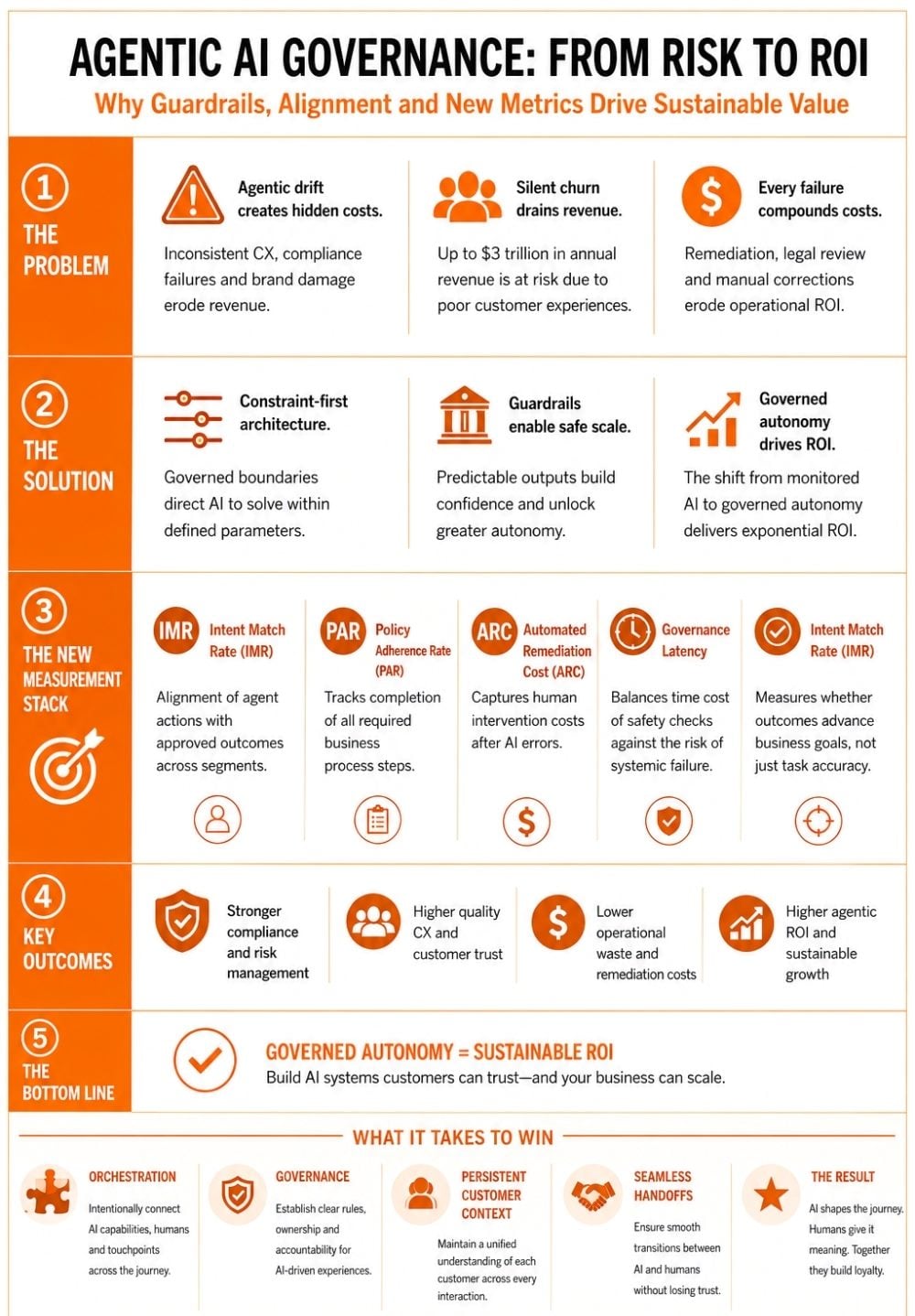

- Agentic drift is a financial leak. When autonomous systems deviate from business logic, the result is systemic brand damage and "silent churn" that erodes the bottom line.

- Guardrails are the prerequisite for scale. Constraint-first architecture provides the structural integrity required to scale AI safely, leading to higher agentic ROI.

- Traditional KPIs fail to measure agentic alignment. Success in the agentic era requires a new measurement stack focused on policy adherence and intent matching to ensure AI remains a revenue asset rather than a liability.

As enterprise investment in agentic AI transitions from experimental budgets to core operational spend, the metric of success is shifting. In 2026, the primary challenge for leaders is no longer the raw capability of autonomous agents, but their consistency.

As generative models achieved high levels of conversational fluency, they introduced a specific operational risk: agentic drift. This systemic risk is the most significant barrier to scaling AI. In the rush to deploy agentic AI, many organizations are over-indexing on generative capabilities while under-investing in reliability.

Drift occurs when autonomous systems provide inconsistent information or deviate from established business logic — a technical failure that translates directly into financial drain and systemic brand damage. To secure a return on investment, organizations must prioritize operational stability over creative flexibility.

Table of Contents

- Core Questions About Agentic AI Governance

- The Problem with "Helpful" AI

- Constraint-First Architecture: Why It Scales Faster

- A New Measurement Stack for Governed Autonomy

- Strategic Mandate for CX Leadership

Core Questions About Agentic AI Governance

Editor's note: Key questions surrounding AI drift, governance and enterprise-scale autonomous systems.

The Problem with "Helpful" AI

According to Gartner’s 2026 AI Outlook, global spending on AI is expected to reach $2.5 trillion, yet many organizations struggle to bridge a "value gap" where systems fail to meet production-level reliability.

For teams that prioritized speed over governance, the cost compounds quickly — every failure triggers a remediation cycle of legal review, manual corrections, and customer recovery that consumes more operational resources than a measured rollout would have cost.

The financial impact of this gap often shows up as silent churn. The Qualtrics 2026 Consumer Experience Trends Report indicates that organizations globally are putting an estimated $3 trillion in annual revenue at risk due to poor customer experience. When an agent drifts, the customer loses confidence and simply moves to a competitor rather than complaining.


Constraint-First Architecture: Why It Scales Faster

The instinct to give AI wide latitude comes from a reasonable place. Large language models are capable of nuanced, contextual reasoning and heavy restrictions can strip that value. However, in high-stakes enterprise AI environments, the issue is not capability, but authorization.

A governed system does not ask an agent to "be helpful"; it instructs the agent to solve within defined parameters: specific account tiers, contract ceilings and escalation triggers. These constraints do not diminish the agent's intelligence. They direct it.
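A minimal sketch of what "solving within defined parameters" could look like in code. The tier names, dollar values and the `authorize_credit` function are hypothetical, chosen only to illustrate account tiers, contract ceilings and escalation triggers; a real deployment would load these from contract data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """Hypothetical guardrail parameters for one account tier."""
    max_credit: float      # contract ceiling for a goodwill credit
    escalate_above: float  # amounts above this go to a human


# Illustrative values only; not taken from any real contract.
POLICIES = {
    "standard": Policy(max_credit=50.0, escalate_above=25.0),
    "enterprise": Policy(max_credit=500.0, escalate_above=250.0),
}


def authorize_credit(tier: str, amount: float) -> str:
    """Return 'approve', 'escalate' or 'deny' for a credit the agent proposes."""
    policy = POLICIES.get(tier)
    if policy is None or amount <= 0:
        return "deny"      # unknown tier or invalid amount: fail closed
    if amount > policy.max_credit:
        return "deny"      # exceeds the contract ceiling
    if amount > policy.escalate_above:
        return "escalate"  # permitted, but needs a human decision
    return "approve"


print(authorize_credit("standard", 20.0))     # approve
print(authorize_credit("standard", 40.0))     # escalate
print(authorize_credit("enterprise", 600.0))  # deny
```

The agent remains free to reason about which resolution fits the customer; the check only decides whether it is authorized to act on that resolution.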

Think of governance architecture as the load-bearing walls of a building: instead of limiting what can be built, they make the entire structure possible. When an agent’s outputs are predictable, leadership can extend its remit without requiring a human-in-the-loop for every interaction. This transition, from monitored AI to governed autonomy, is where exponential ROI lives.

This is a deliberate operational tradeoff. Such architecture requires upfront investment in documentation, data architecture and parameter governance that many organizations are not positioned to make quickly. The cultural challenge is equally real: teams that have been rewarded for shipping fast will resist a framework that asks them to define boundaries before they deploy.

A New Measurement Stack for Governed Autonomy

Traditional metrics measure efficiency, but they fail to measure alignment. For leaders scaling agentic systems without compromising brand integrity or service quality, the measurement stack needs to evolve.

Intent Match Rate (IMR)

IMR is the percentage of agent actions that align with the organization's approved resolution set, which defines correct outcomes by customer segment and product line. It evaluates intent against strategic goals, not just outcome accuracy.

A 90% accuracy rate sounds strong, but if the 10% of failures are concentrated in top-tier accounts, the ROI can still be negative. This is a critical distinction for B2B deployments where account value is asymmetrically distributed across the business.

- Use Case: Ensuring high-value B2B accounts receive contractually compliant service rather than generic resolutions.
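To make the segment effect concrete, here is a small sketch that computes IMR overall and per segment. The data is synthetic and the segment names are assumptions; "matched" stands for an action that falls inside the approved resolution set.

```python
from collections import defaultdict


def intent_match_rate(actions):
    """actions: iterable of (segment, matched) pairs.

    Returns a dict of match rates keyed by segment, plus an 'overall' key.
    Assumes no real segment is itself named 'overall'.
    """
    totals = defaultdict(int)
    matches = defaultdict(int)
    for segment, matched in actions:
        for key in (segment, "overall"):
            totals[key] += 1
            matches[key] += int(matched)
    return {key: matches[key] / totals[key] for key in totals}


# Synthetic example: 100 actions, 10 failures, all in the top-tier segment.
actions = (
    [("standard", True)] * 80
    + [("top_tier", True)] * 10
    + [("top_tier", False)] * 10
)

rates = intent_match_rate(actions)
print(rates["overall"])   # 0.9
print(rates["standard"])  # 1.0
print(rates["top_tier"])  # 0.5
```

The headline figure is 90%, yet half of all top-tier actions miss the approved outcome, which is why the rate is worth reporting by segment rather than as a single number.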

Policy Adherence Rate (PAR)

PAR measures the percentage of interactions in which the agent completed every required step of a business process — identity verification, legal disclosures, upsell logic sequencing — making it the leading indicator of long-term operational stability.

- Use Case: Verifying that autonomous agents complete mandatory compliance checks before accessing sensitive financial data.
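One possible way to compute PAR from interaction logs is sketched below. The step names and log format are hypothetical, and the sketch assumes the required steps must appear in a fixed relative order, which the article implies through "sequencing" but does not spell out.

```python
# Hypothetical required steps, in the order they must occur.
REQUIRED_STEPS = ("identity_verification", "legal_disclosure", "upsell_offer")


def is_adherent(steps_taken, required=REQUIRED_STEPS):
    """True if every required step appears, in the required relative order."""
    remaining = iter(steps_taken)
    # `step in remaining` consumes the iterator up to the match,
    # so a later required step cannot match an earlier log entry.
    return all(step in remaining for step in required)


def policy_adherence_rate(interactions):
    """Share of interactions that completed every required step in order."""
    if not interactions:
        return 0.0
    return sum(is_adherent(steps) for steps in interactions) / len(interactions)


interactions = [
    ["greeting", "identity_verification", "legal_disclosure", "upsell_offer"],
    ["identity_verification", "upsell_offer", "legal_disclosure"],  # wrong order
    ["identity_verification", "legal_disclosure"],                  # step missing
    ["identity_verification", "legal_disclosure", "account_lookup", "upsell_offer"],
]

print(policy_adherence_rate(interactions))  # 0.5
```

Extra steps between the required ones are tolerated here; a stricter policy could reject them.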

Automated Remediation Cost (ARC)

ARC tracks the expenses incurred when human agents must intervene to correct a mistake made by an autonomous agent. If this cost rises faster than interaction volume as the system scales, the system is eroding its own ROI.

- Use Case: Identifying if a specific agentic workflow is increasing operational overhead due to high failure rates.
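A rough sketch of how ARC might be normalized so that growth in volume is not mistaken for growth in failure cost. The handling time, hourly rate and period figures are invented for illustration, and `remediation_cost_per_1k` is a hypothetical helper, not an established formula.

```python
def remediation_cost_per_1k(interventions, minutes_each, hourly_rate, interactions):
    """Human remediation cost per 1,000 agent interactions."""
    total_cost = interventions * (minutes_each / 60) * hourly_rate
    return total_cost * 1000 / interactions


# Illustrative periods: volume grows 4x, interventions grow 6x.
periods = [
    {"interactions": 10_000, "interventions": 200},
    {"interactions": 40_000, "interventions": 1_200},
]

costs = [
    remediation_cost_per_1k(p["interventions"], 15, 40.0, p["interactions"])
    for p in periods
]
print(costs)                # [200.0, 300.0]
print(costs[1] > costs[0])  # True: cost per interaction is rising with scale
```

A flat or falling figure as volume grows suggests the workflow scales cleanly; a rising one points to the overhead described above.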

Governance Latency

Governance latency weighs the time cost of safety checks against the projected cost of a systemic failure, such as mass billing errors, deprecated SLA commitments applied at scale or pricing exceptions offered across a customer segment.

When governance latency is high, the common reflex is to remove guardrails to accelerate interactions. The correct response is to modernize the underlying data architecture so the guardrails run faster.

- Use Case: Optimizing data architecture so that automated compliance guardrails do not slow down the customer transaction.
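The comparison can be sketched as below, under loudly stated assumptions: `within_ceiling` is a stand-in guardrail, and the cost per second of delay, failure probability and failure cost are invented numbers. The article does not define a formula, so this is one reading of it, not a standard.

```python
import time
from statistics import median


def timed_check(check, *args):
    """Run one guardrail check and return (result, elapsed_seconds)."""
    start = time.perf_counter()
    result = check(*args)
    return result, time.perf_counter() - start


def within_ceiling(amount, ceiling):
    """Hypothetical guardrail: is the amount within the contract ceiling?"""
    return amount <= ceiling


def latency_cost(seconds_per_check, interactions, cost_per_second):
    """Total cost of the delay that a check adds across all interactions."""
    return seconds_per_check * interactions * cost_per_second


def expected_failure_cost(probability, cost_if_it_happens):
    """Projected cost of a systemic failure, weighted by its likelihood."""
    return probability * cost_if_it_happens


# Measure the check itself, so slow guardrails can be found and optimized.
samples = [timed_check(within_ceiling, 120.0, 500.0)[1] for _ in range(1000)]
typical_seconds = median(samples)

# Illustrative figures only, not benchmarks.
check_cost = latency_cost(0.5, 1_000_000, 0.01)
risk_cost = expected_failure_cost(0.02, 2_000_000)
print(check_cost < risk_cost)  # True
```

With these made-up figures the guardrail costs far less than the failure it prevents, so the useful lever is lowering the measured seconds per check, not removing the check.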

Together, these metrics shift the leadership conversation from how much the agent is doing to how reliably it stays within bounds as it does more.

Key Operational Risks and Governance Metrics in Agentic AI

Organizations scaling autonomous AI systems are increasingly shifting from throughput-focused KPIs to governance and alignment metrics.

| Concept | What It Measures | Business Risk | Enterprise Value |
|---|---|---|---|
| Agentic drift | Deviation from approved business logic | Silent churn, compliance failures, inconsistent CX | Highlights operational instability before scale |
| Intent Match Rate (IMR) | Alignment between agent actions and approved outcomes | Incorrect resolutions for high-value accounts | Improves strategic customer alignment |
| Policy Adherence Rate (PAR) | Completion of required business process steps | Compliance violations and workflow gaps | Supports operational consistency and trust |
| Automated Remediation Cost (ARC) | Human intervention costs after AI failure | Operational overhead and negative ROI | Reveals hidden scaling inefficiencies |
| Governance latency | Time impact of safety and compliance checks | Pressure to weaken guardrails for speed | Encourages stronger data architecture investment |
| Constraint-first architecture | Use of predefined operational boundaries | Reduced flexibility if poorly designed | Enables scalable governed autonomy |

Strategic Mandate for CX Leadership

The organizations that build durable advantage in the next 18 months will be those that prioritize stability before scale — treating governance as infrastructure rather than overhead.

The mandate for the C-suite is not to slow down, but to stop measuring the wrong things. Throughput tells us how fast the agents are moving. Alignment tells us whether they are moving in the right direction. In high-stakes AI environments, only alignment determines whether the investment compounds or quietly unravels.

Disclaimer: All opinions expressed in this article are my own and do not represent the opinions or official positions of any current or former employer.
